Most CDPs predict purchase likelihood with a single model. Two-stage hurdle models fix that — and unlock sharper segmentation for SEA brands.

Most purchase prediction models inside CDPs are quietly lying to your activation team. Not through bad intent — through bad architecture. When a single model tries to predict both whether a customer will buy and how much they’ll spend, it collapses two fundamentally different statistical problems into one output. The result is a propensity score that looks clean in the dashboard and underperforms in the campaign.

The Zero-Inflation Problem Your CDP Vendor Won’t Mention

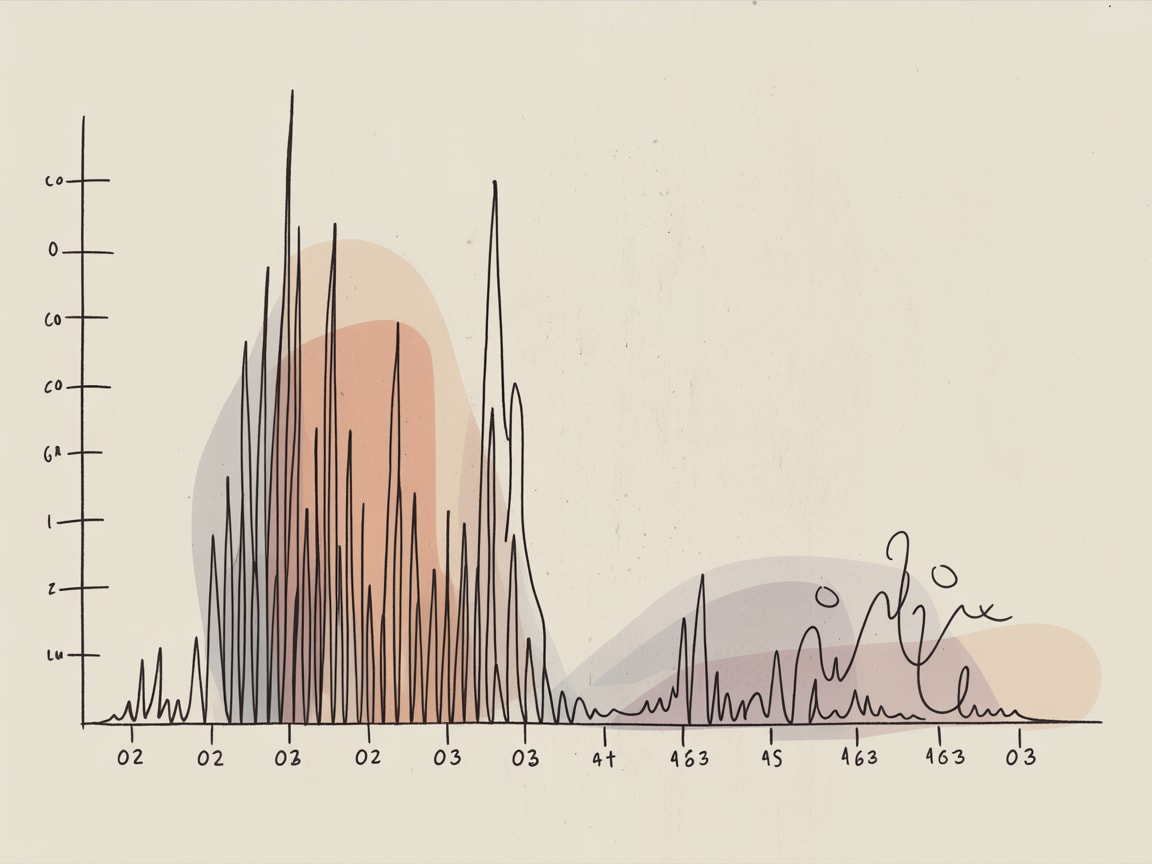

In any given 30-day window, the majority of customers in a typical SEA e-commerce dataset register zero transactions. On Shopee or Lazada, that spike at zero is dramatic — seasonal purchase cycles, deal-seeking behaviour, and high browse-to-buy ratios mean most users are inactive most of the time. Towards Data Science contributor Derek Tran describes this as a “zero-inflated” outcome: a distribution so skewed toward zero that standard regression models bend themselves out of shape trying to accommodate it.

The practical consequence is model compromise. A regression trained on zero-inflated data will systematically underestimate the spend of genuine high-value customers, because the mass of zeros drags every prediction downward, while still assigning meaningful predicted spend to casual browsers who will buy nothing. Your “high propensity” segment becomes a mixed bag of real intent and statistical noise.
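A toy illustration of that compromise, using made-up spend figures for ten hypothetical customers. With no features at all, the squared-error-optimal single prediction is the overall mean, which is a reasonable stand-in for how a blended model gets pulled toward zero:

```python
from statistics import mean

# Hypothetical 30-day spend for ten customers (illustrative values, not real data).
spend = [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 120.0, 80.0]

# A featureless model minimising squared error predicts the overall mean for everyone.
blended_prediction = mean(spend)

# Splitting the question in two: who buys, and what buyers spend.
buyers = [s for s in spend if s > 0]
purchase_rate = len(buyers) / len(spend)
mean_spend_given_purchase = mean(buyers)

print(f"blended prediction per customer: {blended_prediction:.2f}")
print(f"purchase rate: {purchase_rate:.2f}")
print(f"mean spend among buyers: {mean_spend_given_purchase:.2f}")
```

The blended figure is too low for the two real buyers and too high for the eight customers who spent nothing. It describes nobody in the dataset.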

The architectural fix is a two-stage hurdle model. Stage 1 is a binary classifier that answers only one question: will this customer transact at all? Stage 2 is a regression model trained exclusively on customers who cleared that first hurdle, predicting spend magnitude conditional on a purchase happening. Two jobs, two models, one honest output.
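If you also need a single expected-value number per customer, the two stage outputs combine by multiplication: expected spend equals the probability of purchase times the expected spend given a purchase. A minimal sketch, where the function name `expected_spend` and its arguments are illustrative rather than part of any CDP's API:

```python
def expected_spend(p_purchase: float, spend_if_purchase: float) -> float:
    """Combine Stage 1 and Stage 2 outputs into an unconditional expected spend."""
    if not 0.0 <= p_purchase <= 1.0:
        raise ValueError("p_purchase must be a probability in [0, 1]")
    if spend_if_purchase < 0:
        raise ValueError("spend_if_purchase must be non-negative")
    return p_purchase * spend_if_purchase


# Hypothetical customer: 25% chance of buying, 80.0 predicted basket if they do.
print(expected_spend(0.25, 80.0))
```

Keeping the two inputs separate until this final step is the point of the design: the product is useful for budgeting, but the individual components are what drive segmentation.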

Building the Two-Stage Architecture Inside Your CDP

Implementing this inside a modern CDP — whether you’re on Segment, mParticle, or a composable stack with dbt and BigQuery — requires a deliberate feature engineering layer upstream of your model training pipeline.

For Stage 1, your binary classifier needs behavioural signals that indicate intent to transact: session frequency, cart additions, voucher engagement, category browse depth. In LINE-heavy markets like Thailand, messaging open rates tied to promotional content are a strong leading indicator. For Stage 2, you shift feature sets entirely — historical average order value, product category preferences, payment method (instalment users tend toward higher basket sizes), and recency of last high-value transaction.
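One way to keep the two feature sets from bleeding into each other is to declare them explicitly and split each profile before it reaches either model. The sketch below assumes a flat profile dictionary; every field name in it is hypothetical and would need mapping to your own event schema:

```python
# Hypothetical field names; adapt to your own CDP traits and event properties.
STAGE1_FEATURES = (
    "sessions_30d",
    "cart_adds_30d",
    "voucher_clicks_30d",
    "category_browse_depth",
)
STAGE2_FEATURES = (
    "avg_order_value",
    "top_category",
    "uses_instalments",
    "days_since_high_value_order",
)


def split_features(profile: dict) -> tuple:
    """Return (stage1_features, stage2_features) for one customer profile."""
    missing = [k for k in STAGE1_FEATURES + STAGE2_FEATURES if k not in profile]
    if missing:
        raise KeyError(f"profile is missing features: {missing}")
    stage1 = {k: profile[k] for k in STAGE1_FEATURES}
    stage2 = {k: profile[k] for k in STAGE2_FEATURES}
    return stage1, stage2
```

Failing loudly on a missing field is a deliberate choice here: a silently defaulted feature is harder to debug than a rejected profile.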

The critical implementation step most teams skip: the two models must be trained on different labels and different populations. Stage 1 is trained on all customers with a binary 0/1 purchase flag. Stage 2 is trained only on the subset who purchased, with actual spend as the target variable. Contaminating Stage 2 with non-purchasers reintroduces the zero-inflation problem you set out to solve.
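The label split can be sketched in a few lines. This assumes one row per customer per label window with a total spend figure; the `CustomerWindow` type and `build_training_labels` helper are illustrative names, not an existing library:

```python
from dataclasses import dataclass


@dataclass
class CustomerWindow:
    customer_id: str
    spend: float  # total spend in the label window; 0.0 means no purchase


def build_training_labels(rows):
    """Return (stage1_labels, stage2_labels) from per-customer spend rows."""
    # Stage 1: every customer, binary purchase flag.
    stage1 = [(r.customer_id, 1 if r.spend > 0 else 0) for r in rows]
    # Stage 2: purchasers only, actual spend as the regression target.
    stage2 = [(r.customer_id, r.spend) for r in rows if r.spend > 0]
    return stage1, stage2


rows = [
    CustomerWindow("a", 0.0),
    CustomerWindow("b", 45.5),
    CustomerWindow("c", 0.0),
    CustomerWindow("d", 210.0),
]
stage1_labels, stage2_labels = build_training_labels(rows)
print(stage1_labels)
print(stage2_labels)
```

Note that no zero ever appears as a Stage 2 target, which is the property that keeps the spend model free of the zero spike.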

Timeline reality: expect two to three sprints to instrument, train, and validate both stages if your feature store is already in reasonable shape. If you’re still doing feature engineering ad hoc in notebooks, double that.

Activation: What Changes Downstream

The strategic value of this architecture isn’t the model accuracy improvement; it’s the segmentation logic it enables. You now have four operationally distinct customer states:

- Low conversion likelihood / low predicted spend: suppress or nurture cheaply.

- Low conversion likelihood / high predicted spend: re-engagement priority. These are lapsed high-value customers.

- High conversion likelihood / low predicted spend: conversion-focused creative, voucher-light.

- High conversion likelihood / high predicted spend: your VIP activation tier. Treat them accordingly.
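A minimal sketch of the quadrant assignment, assuming you already have a Stage 1 probability and a Stage 2 conditional spend prediction for each customer. The segment names and both default thresholds are placeholders; in practice you would set the cut-offs from your own score distributions and campaign economics:

```python
def assign_quadrant(
    p_purchase: float,
    predicted_spend: float,
    p_threshold: float = 0.5,       # assumed cut-off, tune per market
    spend_threshold: float = 50.0,  # assumed cut-off in local currency units
) -> str:
    """Map the two model outputs to one of four activation segments."""
    high_p = p_purchase >= p_threshold
    high_spend = predicted_spend >= spend_threshold
    if high_p and high_spend:
        return "vip_activation"
    if high_p:
        return "conversion_focus"
    if high_spend:
        return "re_engagement"
    return "suppress_or_nurture"


print(assign_quadrant(0.1, 120.0))
```

Because the function takes the two scores separately, a customer with a low purchase probability but a high conditional spend stays visible as a re-engagement target instead of disappearing into the bottom of a blended ranking.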

Grab’s loyalty and retention teams operate with similar segmentation logic, separating frequency signals from value signals before determining intervention type. The principle holds whether you’re running a super-app or a single-category retailer: conflating conversion probability with spend potential produces campaigns optimised for the wrong objective.

For media activation, this architecture maps cleanly to Meta’s Advantage+ audience inputs and Google’s customer match tiers — feeding each segment the signal that matches its actual commercial profile, rather than a blended propensity score that means different things for different customer archetypes.

Embedding Models as the Connective Tissue

One emerging capability worth tracking: Google’s Gemini Embeddings 2, covered recently by Towards Data Science, positions itself as a unified embedding model capable of handling text, behavioural sequences, and structured data in a shared vector space. For CDP architecture, the implication is material — if you can embed product interaction sequences, customer service transcripts, and transactional history into a common representation, your feature engineering layer for Stage 1 and Stage 2 models becomes significantly richer without the brittle hand-crafted feature pipelines most teams currently maintain.

This is early-stage for most SEA marketing stacks, but the trajectory is clear: the gap between raw behavioural data and model-ready features is narrowing. Teams that invest in clean event schemas and composable data infrastructure now will be positioned to absorb these capabilities without a full rebuild.

Key Takeaways

- Split purchase prediction into two dedicated models — binary conversion classifier first, spend regression second — to eliminate the zero-inflation distortion endemic to SEA e-commerce datasets.

- Map the four resulting customer quadrants (conversion likelihood × spend potential) directly to distinct activation strategies and media inputs, not a single ranked propensity list.

- Invest in a clean, versioned feature store now; emerging embedding models will compound the value of well-structured behavioural data significantly over the next 12 months.

The broader question this raises: how many other places in your CDP architecture are single models quietly doing two jobs? Purchase prediction is the obvious one, but churn propensity models face the same structural problem — conflating when someone will churn with how recoverable they are. That’s a separate two-stage problem worth examining once you’ve validated the purchase model split.

At grzzly, we spend a lot of time inside CDP architectures, figuring out where the model design, the feature engineering, and the activation logic have drifted out of alignment. If your propensity scores aren’t translating into campaign performance, the issue is usually upstream of the campaign itself.

Written by

Velvet Grizzly

Architecting the unified customer profile, stitching together behavioural, transactional, and declared data into platforms that actually earn their licence fee.