Most engagement platforms misread silent customers as disengaged. Two-stage hurdle models fix that — and change who you target and when.

Most customer engagement platforms (CEPs) are solving the wrong problem. They’re trained to predict how much someone will engage: how many sessions, how many purchases, how often they open a push notification. But for a significant slice of any Southeast Asian (SEA) brand’s database, the real question isn’t magnitude. It’s binary: will this person do anything at all?

When you force a single model to answer both questions simultaneously, you get a prediction surface that’s quietly wrong in ways that won’t show up in your model accuracy metrics — but will absolutely show up in your campaign ROI.

The Zero-Inflation Problem Nobody’s Talking About

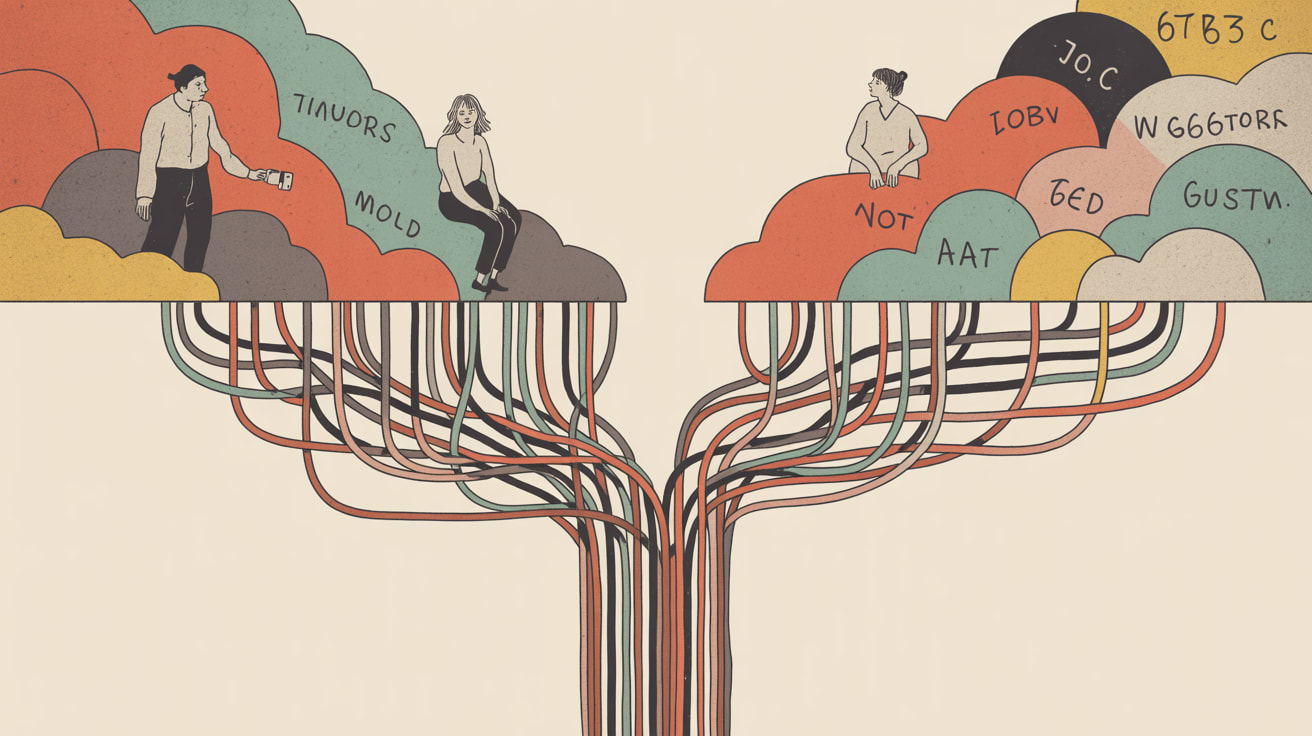

Towards Data Science recently covered two-stage hurdle models in the context of zero-inflated outcomes — datasets where a large proportion of observations record exactly zero activity. The insight is deceptively simple: one model can’t do two jobs. A standard regression or scoring model trained on mixed populations will systematically overestimate engagement likelihood for dormant segments and underestimate intensity for active ones, because it’s trying to average across two fundamentally different behavioural states.

In practice, this plays out as re-engagement campaigns sent to customers who have structurally disengaged — not dormant, but done — while genuinely high-propensity users get deprioritised because their predicted score sits in the middle of a distorted distribution. On Shopee or Lazada, where margin on re-engagement is thin and notification fatigue is a real retention risk, the cost of that misclassification compounds fast.

The fix is architectural, not cosmetic: split your prediction into two explicit stages. Stage one asks whether a customer will cross the zero threshold at all — a classification problem. Stage two, applied only to those predicted to engage, asks how much — a regression problem. The models are separate. The logic is sequential. The outputs are honest.
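To make the split concrete, here is a minimal sketch on invented toy data. It uses per-segment frequency tables as stand-ins for a real Stage 1 classifier and Stage 2 regressor, so the segment names, the numbers, and the `fit_single_stage` / `fit_hurdle` helpers are all assumptions for illustration, not a production recipe.

```python
from statistics import mean

# Hypothetical toy data: (segment, purchases in the next 30 days).
history = [
    ("lapsed", 0), ("lapsed", 0), ("lapsed", 0), ("lapsed", 0), ("lapsed", 6),
    ("active", 0), ("active", 4), ("active", 5), ("active", 6), ("active", 5),
]

def group_by_segment(rows):
    """Collect outcomes per segment."""
    grouped = {}
    for segment, y in rows:
        grouped.setdefault(segment, []).append(y)
    return grouped

def fit_single_stage(rows):
    """One model for everything: mean outcome per segment, zeros included."""
    return {s: mean(ys) for s, ys in group_by_segment(rows).items()}

def fit_hurdle(rows):
    """Two stages: P(y > 0) per segment, then mean outcome among engagers only."""
    model = {}
    for s, ys in group_by_segment(rows).items():
        positives = [y for y in ys if y > 0]
        model[s] = {
            "p_engage": len(positives) / len(ys),               # Stage 1
            "intensity": mean(positives) if positives else 0.0,  # Stage 2
        }
    return model

single = fit_single_stage(history)
hurdle = fit_hurdle(history)

for segment in ("lapsed", "active"):
    print(segment, "single:", single[segment], "hurdle:", hurdle[segment])
```

In this toy set the single-stage estimate for the lapsed segment looks like a population of light buyers, while the two-stage view shows that most of them do nothing and the few who return buy heavily. Those are two different targeting problems. In a real build, Stage 1 would typically be a classifier and Stage 2 a regression fitted only on customers with non-zero activity.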

Implementing Two-Stage Scoring Inside a CEP

The implementation challenge isn’t statistical — most modern data science teams can build a hurdle model in a sprint. The harder problem is plumbing it into your engagement stack in a way that actually changes campaign behaviour.

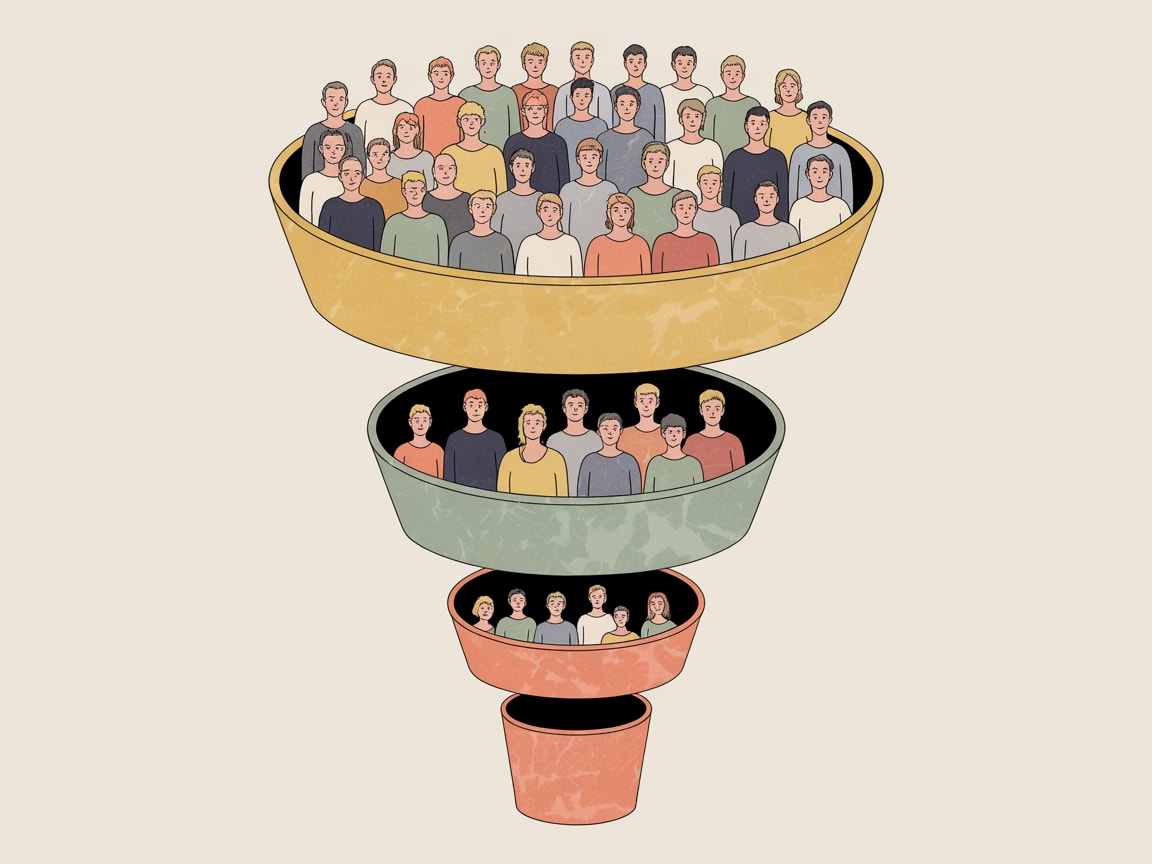

Here’s what that looks like concretely: your Stage 1 classifier runs during your nightly audience refresh, tagging each customer with an engagement-likelihood flag. That flag can be a binary label or a probability compared against a cut-off; 0.3–0.4 is a reasonable starting range for SEA e-commerce populations with high seasonal variance, though it should be validated against your own conversion data. Stage 2 scores are computed only for the subset above that threshold, predicting purchase value, session depth, or whatever outcome metric your campaign optimises against.

The critical implementation detail: these two outputs should feed separate decision rules in your CEP, not be multiplied together into a single composite score. The product is a valid expected value, but as a single targeting score it gives a rare, high-value engager and a frequent, low-value one the same number, which re-introduces the averaging problem you just solved. In Braze, Insider, or MoEngage, this means building two distinct audience segments before any campaign logic is applied, not one segment with a blended score filter.
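A rough sketch of what separate decision rules can look like once the scores are exported from your model. The thresholds, the `ScoredCustomer` type, and the segment names are hypothetical; nothing here is a Braze, Insider, or MoEngage API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cut-offs for illustration; tune both against your own data.
ENGAGE_THRESHOLD = 0.35    # Stage 1 gate
HIGH_VALUE_CUTOFF = 50.0   # Stage 2 cut-off, in your reporting currency

@dataclass
class ScoredCustomer:
    customer_id: str
    p_engage: float                  # Stage 1: probability of any activity
    expected_value: Optional[float]  # Stage 2: predicted value if they engage

def assign_segment(c: ScoredCustomer) -> str:
    """Apply Stage 1 and Stage 2 as two separate rules, in order."""
    if c.p_engage < ENGAGE_THRESHOLD:
        return "suppress"            # never reaches the Stage 2 rule
    if c.expected_value is not None and c.expected_value >= HIGH_VALUE_CUTOFF:
        return "high_value"
    return "nurture"

def composite_score(c: ScoredCustomer) -> float:
    """The blended score, shown only for comparison."""
    return c.p_engage * (c.expected_value or 0.0)

# In production, customer A would not get a Stage 2 score at all;
# one is supplied here so the two approaches can be compared side by side.
rare_big = ScoredCustomer("A", p_engage=0.25, expected_value=80.0)
steady_small = ScoredCustomer("B", p_engage=0.80, expected_value=25.0)

for c in (rare_big, steady_small):
    print(c.customer_id, round(composite_score(c), 2), assign_segment(c))
```

Both customers get about the same composite score, yet the sequential rules suppress one and nurture the other. That difference is what a blended score filter hides.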

For teams managing multilingual audiences across markets like Thailand, Vietnam, and the Philippines, there’s an added layer: zero-inflation rates vary significantly by market and language cohort. A model trained on aggregated regional data will inherit those biases. Segment your Stage 1 training data by market, at minimum.
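One quick way to check whether this matters for your data is to compare zero rates per market against the pooled rate. The sketch below uses invented rows and hypothetical market codes; swap in your own export.

```python
from collections import defaultdict

# Hypothetical rows: (market code, sessions in the observation window).
rows = [
    ("TH", 0), ("TH", 0), ("TH", 3), ("TH", 1),
    ("VN", 0), ("VN", 2), ("VN", 5), ("VN", 1),
    ("PH", 0), ("PH", 0), ("PH", 0), ("PH", 4),
]

def zero_rate_by_market(rows):
    """Share of customers with exactly zero activity, per market."""
    totals = defaultdict(int)
    zeros = defaultdict(int)
    for market, sessions in rows:
        totals[market] += 1
        if sessions == 0:
            zeros[market] += 1
    return {m: zeros[m] / totals[m] for m in totals}

def split_by_market(rows):
    """Partition training rows so each market can get its own Stage 1 model."""
    parts = defaultdict(list)
    for market, sessions in rows:
        parts[market].append(sessions)
    return dict(parts)

rates = zero_rate_by_market(rows)
pooled = sum(1 for _, s in rows if s == 0) / len(rows)

for market, rate in sorted(rates.items()):
    print(market, f"zero rate {rate:.2f}", f"(pooled {pooled:.2f})")
```

If the per-market rates sit far from the pooled figure, as they do in this toy example, a single regional Stage 1 model is fitting a baseline that matches none of your markets.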

When Agents Are Scoring Your Customers, Accountability Gets Blurry

This is where the architectural concern gets sharper. As more CEP platforms introduce agentic orchestration — automated agents that trigger other agents to build audiences, adjust bids, or suppress contacts — the two-stage logic described above becomes a governance problem, not just a modelling one.

Barr Moses at Monte Carlo Data published a sharp piece on exactly this dynamic: when agents manage agents, blame disappears but consequences don’t. She describes a scenario where a misconfigured memory store, left unmonitored for three weeks, propagates silent errors through an entire orchestration chain. Nobody is paged. The system keeps running. The outputs look plausible.

Applied to CEP: if your Stage 1 classifier is being refreshed by an automated pipeline, and that pipeline pulls from a feature store that was quietly reconfigured during a platform migration, your engagement-likelihood scores are now wrong in ways that look like normal variance. You won’t see it in dashboard metrics until suppression lists have been incorrectly rebuilt or re-engagement budgets have been misallocated for weeks.

The mitigation isn’t to avoid automation. It’s to build explicit observability checkpoints at the boundary between Stage 1 and Stage 2 outputs. Monitor the distribution of your Stage 1 classification scores over time, not just model accuracy on holdout sets. Treat a sudden shift in the proportion of customers flagged as engagement-eligible as a leading indicator of upstream data drift first, and as a strategic insight about your audience only once the pipeline has been ruled out.
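A checkpoint like this can be quite small. The sketch below tracks two numbers between refreshes: the share of customers above the Stage 1 gate, and a Population Stability Index (PSI) over the score distribution. The 0.35 gate, the bin count, and the 0.25 alert level are assumptions; 0.25 is a commonly quoted rule of thumb for PSI, not a universal standard.

```python
import math

def eligible_share(scores, threshold=0.35):
    """Proportion of customers at or above the Stage 1 gate."""
    return sum(s >= threshold for s in scores) / len(scores)

def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index over equal-width bins on [0, 1]."""
    def proportions(scores):
        counts = [0] * bins
        for s in scores:
            counts[min(int(s * bins), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(scores), eps) for c in counts]

    base, cur = proportions(baseline), proportions(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))

def drift_alert(baseline, current, psi_limit=0.25):
    """True when the score distribution has moved more than the assumed limit."""
    return psi(baseline, current) > psi_limit

# Hypothetical Stage 1 scores from two nightly refreshes.
last_month = [0.05, 0.10, 0.15, 0.20, 0.30, 0.45, 0.60, 0.80]
last_night = [0.40, 0.45, 0.50, 0.55, 0.60, 0.65, 0.70, 0.80]

print("eligible share:", eligible_share(last_month), "->", eligible_share(last_night))
print("drift alert:", drift_alert(last_month, last_night))
```

Running a check like this before Stage 2 scoring and before any audience sync means a reconfigured feature store shows up as an alert rather than as a rebuilt suppression list.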

Embeddings and the Next Frontier of Behavioural Similarity

One emerging capability that makes two-stage modelling significantly more powerful: dense vector embeddings of customer behaviour sequences. Google’s Gemini Embeddings 2, previewed last week, represents the state of the art in capturing semantic similarity across heterogeneous input types — and that capability translates directly to customer data if you’re encoding behavioural sequences, product interaction histories, or content consumption patterns as embeddings rather than sparse feature vectors.

The practical application for Stage 1 classification: instead of engineering explicit recency-frequency-monetary features and hoping they capture behavioural similarity, you can embed the full sequence of a customer’s interactions and use cosine similarity to the centroid of your known-engaged population as a direct input to your hurdle model. Brands like Grab and regional super-apps are already experimenting with this approach for session-level personalisation. For mid-market brands without a dedicated ML team, the more realistic near-term play is using embedding-powered similarity to enrich your feature set rather than replace it — still meaningful signal, lower infrastructure lift.
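As a sketch of the enrichment route, the snippet below computes cosine similarity between a customer’s embedding and the centroid of a known-engaged group, then adds it to an existing feature row. The three-dimensional vectors and the `sim_to_engaged` feature name are made up for illustration; real embeddings would come from whichever embedding model you use and would have far more dimensions.

```python
import math

def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical embeddings of customers already known to be engaged.
engaged_embeddings = [
    [0.9, 0.1, 0.0],
    [0.8, 0.2, 0.1],
    [1.0, 0.0, 0.2],
]
engaged_centroid = centroid(engaged_embeddings)

def enrich(features, embedding):
    """Add the similarity score next to existing RFM-style features."""
    enriched = dict(features)
    enriched["sim_to_engaged"] = cosine_similarity(embedding, engaged_centroid)
    return enriched

row = enrich({"recency_days": 12, "frequency_90d": 3}, [0.85, 0.15, 0.05])
print(row)
```

The similarity score becomes one more column for the Stage 1 classifier, which keeps the existing feature set and model in place.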

Three things to act on this week:

- Audit your current propensity scores for zero-inflation bias: pull the distribution of your engagement likelihood scores and check whether dormant customers are clustering in the 0.2–0.4 range rather than near zero. If they are, your model is hedging.

- Build a Stage 1 / Stage 2 split into your next campaign brief — even as a manual audience segmentation exercise before you rebuild the model architecture. The discipline of separating “will engage” from “how much” changes targeting decisions immediately.

- Instrument your feature pipelines with distribution monitoring before you hand scoring logic to any automated orchestration layer. Silent data drift is the most expensive bug in CEP.
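For the audit in the first item, a few lines are enough to get a first read. This sketch assumes you can export each customer’s current propensity score alongside a dormancy flag; how you define dormant (for example, no activity in 90 days) is your call, and the 0.2–0.4 band is the one suggested above.

```python
def hedging_share(scores, dormant_flags, low=0.2, high=0.4):
    """Share of dormant customers whose score sits inside the hedging band."""
    dormant_scores = [s for s, is_dormant in zip(scores, dormant_flags) if is_dormant]
    if not dormant_scores:
        return 0.0
    in_band = sum(low <= s <= high for s in dormant_scores)
    return in_band / len(dormant_scores)

# Hypothetical export: propensity score and dormancy flag per customer.
scores = [0.05, 0.25, 0.30, 0.35, 0.70]
dormant = [True, True, True, True, False]

print(f"dormant customers in the 0.2-0.4 band: {hedging_share(scores, dormant):.0%}")
```

A high share here suggests the model is splitting the difference for customers it should be scoring near zero.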

The deeper question worth sitting with: as engagement infrastructure becomes more automated and more agentic, are we building systems that are genuinely smarter about human behaviour — or systems that are confidently wrong at scale, with nobody watching the gap?

At Grzzly, we work with growth and CRM teams across SEA to design CEP frameworks that are architecturally honest about what their data can and can’t predict. If your engagement scoring feels like it’s optimising for the wrong population, that’s usually a modelling structure problem — and it’s fixable. Let’s talk at grzz.ly.

Written by

Brooding Grizzly

Designing CEP frameworks that move beyond batch-and-blast into real-time, context-aware engagement across channels, devices, and the messiness of actual human behaviour.