Most CEP stacks look clean in decks and messy in production. Here's how to build data architecture that actually drives real-time, context-aware engagement.

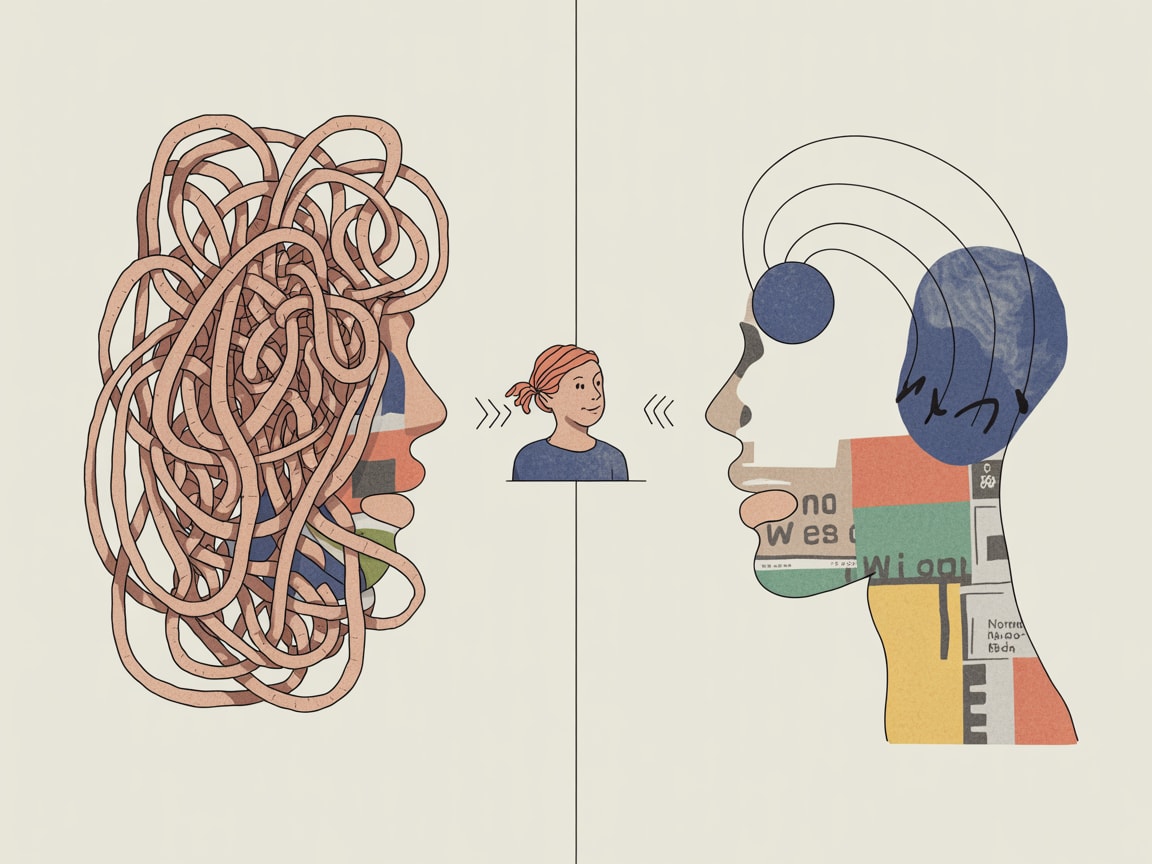

Fewer than one in five conversations between salespeople and B2B buyers are considered a valuable use of the buyer’s time, according to CustomerThink. Swap “salespeople” for “automated customer engagement” and the number almost certainly gets worse — because at least a human can read the room.

Most CEP stacks don’t fail at the campaign layer. They fail two levels below it, in the data architecture that feeds every decision. The segmentation is stale. The signals are incomplete. The context is missing. And so the platform fires the right message at exactly the wrong moment — confidently, at scale.

This isn’t a tooling problem. It’s a structural one.

The Batch-and-Blast Hangover Lives in Your Schema

The reason most “real-time” CEPs still behave like batch systems isn’t the platform — it’s the data model underneath. Profiles built for weekly email sends aren’t designed to carry the kind of high-frequency, low-latency signals that real-time engagement actually requires: session depth, recency of intent, cross-channel state, in-app micro-behaviours.

When you bolt a real-time decisioning layer onto a schema designed for batch exports, you get a system that technically triggers in milliseconds but decides on data that’s 48 hours old. That’s not real-time engagement. That’s fast batch.

The fix isn’t buying a new CDP. It’s auditing your event taxonomy and asking: does this schema capture state or just events? A user adding three items to cart, abandoning, reopening the app twice within 90 minutes, and then price-checking on Shopee — that’s a state. Most schemas record four discrete events. The difference is everything for context-aware triggering.
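To make the state-versus-events distinction concrete, here is a minimal sketch of collapsing a user's recent events into a single intent state. The event names, state labels, and the 90-minute window are illustrative assumptions, not a standard taxonomy or any specific platform's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    user_id: str
    name: str        # hypothetical event names, e.g. "add_to_cart", "app_open"
    ts: datetime


def derive_cart_state(events: list[Event], now: datetime,
                      window: timedelta = timedelta(minutes=90)) -> dict:
    """Collapse one user's discrete events into a single intent state."""
    # Only events inside the lookback window contribute to the state.
    recent = [e for e in events if now - e.ts <= window]
    cart_items = sum(1 for e in recent if e.name == "add_to_cart")
    reopens = sum(1 for e in recent if e.name == "app_open")
    purchased = any(e.name == "purchase" for e in recent)
    last_seen = max((e.ts for e in recent), default=None)

    # Assumed labels; a real taxonomy would be agreed with the CRM team.
    if purchased:
        label = "converted"
    elif cart_items > 0 and reopens >= 2:
        label = "high_intent_abandoner"
    elif cart_items > 0:
        label = "cart_abandoner"
    else:
        label = "browsing"

    return {
        "state": label,
        "cart_items": cart_items,
        "reopens": reopens,
        "minutes_since_last_event": (
            None if last_seen is None
            else (now - last_seen).total_seconds() / 60
        ),
    }
```

A trigger that reads `state` can distinguish a returning, high-intent abandoner from someone who added one item and left, which four unrelated event rows cannot do on their own.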

Signal Quality Beats Signal Volume, Every Time

There’s a parallel worth drawing from credit risk modelling: data scientists building robust credit scoring models spend a disproportionate amount of time on outlier handling and missing value treatment — not because it’s exciting, but because a model trained on dirty data produces confident wrong answers. The same logic applies to CEP data pipelines.

In SEA markets specifically, signal quality problems compound. Mobile-first behaviour means sessions are shorter and more fragmented. Users move between LINE, WhatsApp, Shopee, and a brand’s own app within a single purchase consideration cycle. Offline-to-online transitions — particularly in markets like Indonesia and Vietnam where assisted commerce remains strong — create attribution gaps that most event pipelines simply don’t model.

The practical implication: before optimising your trigger logic, audit your signal completeness. For a Grab-style super-app context, that means mapping which user actions don’t generate events, not just which ones do. Silent behaviour — the browse session that ends without a tap, the notification that was delivered but never opened — is often more predictive than the actions your analytics team is currently measuring.
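One way to start that audit is to compare a hand-written catalogue of user actions against the event names the pipeline actually emits. The sketch below assumes such a catalogue exists and that event counts can be exported as a simple mapping; the action names are made up for illustration.

```python
def audit_signal_coverage(expected_actions: set[str],
                          observed_events: dict[str, int],
                          min_count: int = 1) -> dict:
    """Compare the actions users can take with the events actually recorded."""
    # Actions in the catalogue that never (or almost never) produce an event.
    silent = sorted(
        a for a in expected_actions if observed_events.get(a, 0) < min_count
    )
    # Events arriving in the pipeline that nobody has mapped to an action.
    unmapped = sorted(e for e in observed_events if e not in expected_actions)
    covered = len(expected_actions) - len(silent)
    coverage = covered / len(expected_actions) if expected_actions else 0.0
    return {
        "silent_actions": silent,
        "unmapped_events": unmapped,
        "coverage": coverage,
    }


# Hypothetical catalogue and export for a super-app style product.
catalogue = {"browse_exit_no_tap", "push_delivered", "push_opened", "add_to_cart"}
export = {"push_opened": 120, "add_to_cart": 45, "legacy_click": 3}
report = audit_signal_coverage(catalogue, export)
```

Here `report["silent_actions"]` surfaces the browse exits and delivered-but-unopened notifications that the pipeline is not capturing at all.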

Designing for Messiness, Not the Happy Path

Every CEP architecture looks elegant in a whiteboard diagram. It falls apart when a user’s timezone doesn’t match their location data, when a loyalty ID doesn’t reconcile across a brand’s app and its Lazada storefront, or when a high-value customer segment is inadvertently suppressed because a consent flag was set to null rather than false.
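The consent example is worth sketching, because the bug usually comes from collapsing three states into two. The following is a minimal illustration, assuming the flag arrives as `True`, `False`, or `None`; the reason codes are hypothetical, and whether an unknown consent may ever be messaged is a legal and policy decision, not a coding one.

```python
from enum import Enum
from typing import Optional


class Consent(Enum):
    GRANTED = "granted"
    DENIED = "denied"
    UNKNOWN = "unknown"   # flag was null or never collected


def parse_consent(flag: Optional[bool]) -> Consent:
    """Keep null distinct from False instead of coercing it with bool()."""
    if flag is True:
        return Consent.GRANTED
    if flag is False:
        return Consent.DENIED
    return Consent.UNKNOWN


def suppression_reason(flag: Optional[bool]) -> Optional[str]:
    """Return why a profile is suppressed, or None if it can be messaged."""
    consent = parse_consent(flag)
    if consent is Consent.DENIED:
        return "opted_out"
    if consent is Consent.UNKNOWN:
        # Still suppressed here, but reported separately so it can be fixed.
        return "consent_missing"
    return None
```

Counting `consent_missing` separately from `opted_out` is what makes an accidentally suppressed high-value segment visible in reporting rather than silently lost.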

Robust engagement architecture has to be designed for the messy middle — not the happy path where every profile is complete, every event fires correctly, and every channel preference is up to date. In practice, this means building explicit fallback logic into your journey design: what does the system do when the real-time signal is missing? Does it default to the last known preference, suppress the message, or trigger a lower-confidence variant?
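Those three fallback options can be written down as an explicit decision function rather than left implicit in journey configuration. This is a sketch under stated assumptions: the action names and both age thresholds are placeholders to be tuned per category, not recommended values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Signal:
    value: str
    observed_at: datetime


def choose_action(realtime: Optional[Signal],
                  last_known: Optional[Signal],
                  now: datetime,
                  realtime_max_age: timedelta = timedelta(minutes=15),
                  fallback_max_age: timedelta = timedelta(days=30),
                  ) -> tuple[str, Optional[str]]:
    """Pick a send decision based on which signals are present and fresh."""
    # 1. Fresh real-time signal: personalise on it.
    if realtime is not None and now - realtime.observed_at <= realtime_max_age:
        return ("send_personalised", realtime.value)
    # 2. No fresh signal, but a reasonably recent stored preference exists.
    if last_known is not None and now - last_known.observed_at <= fallback_max_age:
        return ("send_low_confidence_variant", last_known.value)
    # 3. Nothing trustworthy to decide on: do not send.
    return ("suppress", None)
```

Making the order of fallbacks explicit also gives the team something to review and test, which a default buried in a journey builder does not.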

One useful framing: treat every data field your CEP depends on as having a “confidence half-life.” A purchase event from 12 minutes ago is highly reliable. A declared preference captured at registration 18 months ago may be close to worthless for a brand in a category where tastes shift quickly. Weighting recency into your segmentation logic — rather than treating all profile attributes as equally valid — meaningfully improves trigger relevance without requiring a full data infrastructure overhaul.
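The half-life framing maps directly onto exponential decay: a field's weight halves every time one half-life elapses. The sketch below assumes each attribute is stored with a timestamp; the half-life values in the usage lines are illustrative guesses, not benchmarks.

```python
from datetime import datetime, timedelta


def confidence(observed_at: datetime, now: datetime,
               half_life: timedelta, initial: float = 1.0) -> float:
    """Exponentially decay confidence: it halves once per half-life elapsed."""
    # Clamp negative ages (clock skew, future timestamps) to zero.
    age_seconds = max((now - observed_at).total_seconds(), 0.0)
    return initial * 0.5 ** (age_seconds / half_life.total_seconds())


now = datetime(2024, 6, 1, 12, 0)

# A purchase event 12 minutes old, with an assumed half-life of one day.
purchase_weight = confidence(now - timedelta(minutes=12), now,
                             half_life=timedelta(days=1))

# A declared preference 540 days old (about 18 months), with an assumed
# half-life of 180 days: three half-lives have elapsed.
preference_weight = confidence(now - timedelta(days=540), now,
                               half_life=timedelta(days=180))
```

Under these assumed half-lives the purchase keeps almost all of its weight while the registration-time preference drops to an eighth of it, so a segment rule can threshold on the weight instead of treating both fields as equally valid.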

From Architecture to Activation: Closing the Loop

Data architecture only earns its keep when it changes what gets sent to whom and when. The operational test is simple: can your team run a post-campaign analysis that connects a specific data quality issue to a specific engagement outcome? If the answer is no — if your reporting layer can tell you open rates but not why a segment underperformed — then the architecture isn’t instrumented for learning.

The brands getting this right in SEA are building what amounts to a feedback loop between their engagement outcomes and their data quality monitoring. When a journey underperforms, the first diagnostic question isn’t “was the creative right?” — it’s “were the signals feeding that trigger actually reliable at the time of send?” That reframe alone shifts the conversation from gut-feel optimisation to systematic improvement.
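A simple version of that feedback loop is to log how old the triggering signal was at send time, then break outcomes down by freshness. The sketch assumes a send log where each record carries a `signal_age_minutes` field (or `None` when the signal was absent) and a `converted` flag; both field names and the 60-minute threshold are hypothetical.

```python
from collections import defaultdict


def conversion_by_signal_freshness(sends: list[dict],
                                   stale_after_minutes: float = 60.0) -> dict:
    """Group send outcomes by how reliable the trigger signal was at send time."""
    totals = defaultdict(lambda: {"sends": 0, "conversions": 0})
    for s in sends:
        age = s.get("signal_age_minutes")
        if age is None:
            bucket = "missing"
        elif age <= stale_after_minutes:
            bucket = "fresh"
        else:
            bucket = "stale"
        totals[bucket]["sends"] += 1
        totals[bucket]["conversions"] += int(bool(s.get("converted")))
    # Add a conversion rate per bucket; every bucket has at least one send.
    return {
        bucket: {**t, "rate": t["conversions"] / t["sends"]}
        for bucket, t in totals.items()
    }


# Hypothetical send log for one journey.
log = [
    {"signal_age_minutes": 5, "converted": True},
    {"signal_age_minutes": 30, "converted": False},
    {"signal_age_minutes": 2880, "converted": False},
    {"signal_age_minutes": None, "converted": False},
]
breakdown = conversion_by_signal_freshness(log)
```

If the stale and missing buckets account for most of an underperforming journey's sends, the diagnosis points at the pipeline rather than the creative.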

B2B research from CustomerThink frames it well in a sales context: value-creating conversations require preparation, relevance, and genuine insight into the customer’s current situation. The same is true for automated engagement. The platform is the medium. The data architecture is the preparation. And right now, most brands are showing up underprepared.

The deeper question worth sitting with: if your CEP fired every trigger correctly today but your underlying data model didn’t change, how long before the relevance degrades again — and do you have the instrumentation to notice when it does?

At grzzly, we work with growth and data teams across SEA to diagnose exactly this gap: the distance between what a CEP platform promises and what the data architecture underneath it can actually deliver. If your engagement metrics aren’t moving despite solid tooling, the answer is usually upstream.

Written by

Brooding Grizzly

Designing CEP frameworks that move beyond batch-and-blast into real-time, context-aware engagement across channels, devices, and the messiness of actual human behaviour.