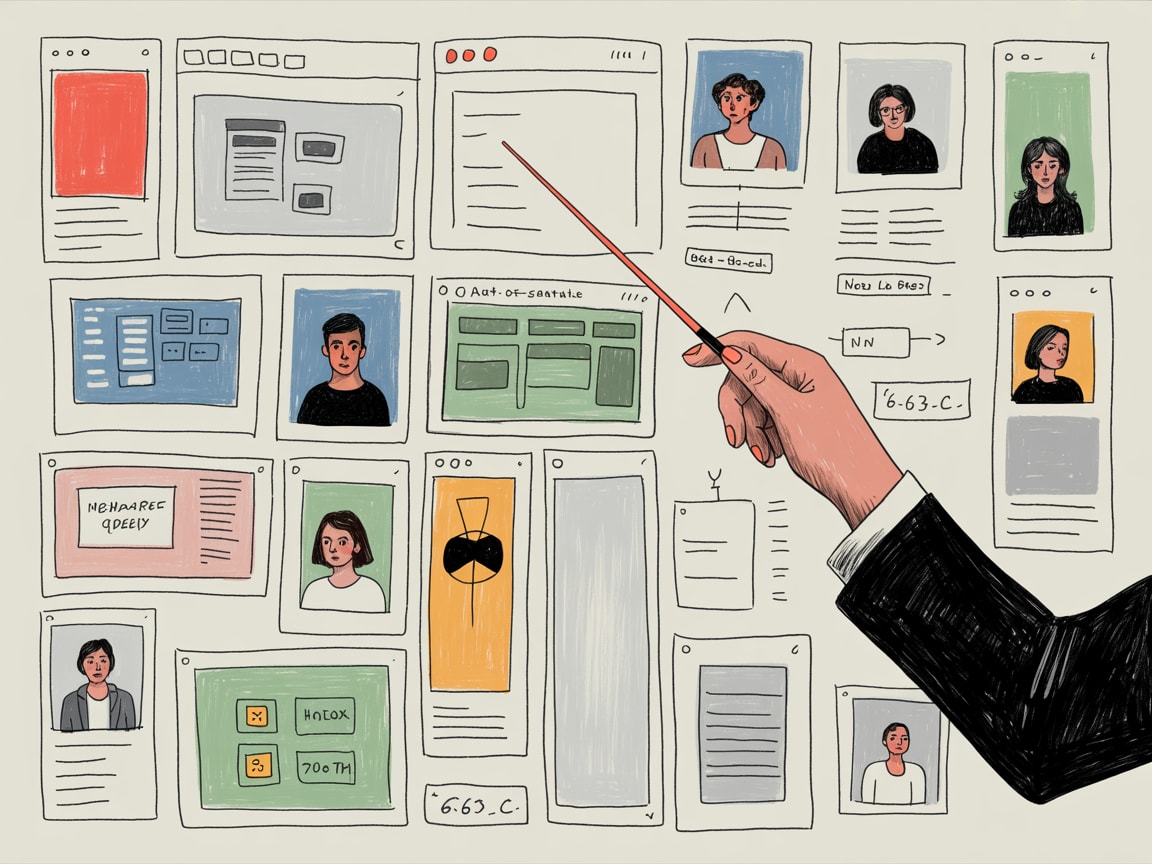

AI can generate wireframes in minutes — but human strategy still determines whether those interfaces actually work. Here's what that shift means for digital teams.

AI can now produce a working wireframe faster than most teams can book a kickoff call. That speed is genuinely useful — and genuinely dangerous if you confuse velocity with direction.

Carrie Webster’s analysis in Smashing Magazine frames this shift precisely: UX design is no longer primarily about making things; it’s about directing intent. For anyone building digital products in 2026, that distinction carries real weight, especially when the thing being built is expected to load in under two seconds, convert across five languages, and perform on a $120 Android device in Surabaya.

AI-Accelerated Workflows Are Already the Default

The tooling has moved fast. Figma’s AI features, alongside tools like Galileo AI and Uizard, can generate multi-screen prototypes from a single prompt. Design systems that once took quarters to document can now be scaffolded in days. For resource-constrained teams, which describes most digital operations in Southeast Asia (SEA), this is a genuine unlock.

But here’s the friction point: AI generates plausible interfaces, not correct ones. A prototype that looks coherent in isolation may embed assumptions about user literacy, connectivity, or purchase intent that simply don’t hold in a Thai or Vietnamese market context. The tool doesn’t know that Grab’s super-app model has trained SEA users to expect deeply integrated flows. It doesn’t know your checkout abandonment rate spikes at the OTP verification step. Human strategy fills that gap — or it doesn’t, and the interface ships broken in ways that don’t show up until conversion data does.

The Performance Layer AI Consistently Underweights

From a web performance standpoint, AI-generated code and design outputs carry a specific risk: they optimise for visual fidelity over render efficiency. A Figma-to-code export might produce semantically reasonable HTML but load three times as many render-blocking resources as a hand-tuned implementation.

Largest Contentful Paint (LCP) and Cumulative Layout Shift (CLS) are the Core Web Vitals where AI-generated builds tend to struggle most. Generated component libraries often lack lazy-loading logic, explicit image dimensions, or a font-display strategy, all table stakes for hitting a 75th-percentile LCP under 2.5 seconds in field data such as the Chrome User Experience Report. In SEA, where median mobile connection speeds in tier-2 cities still hover around 15–20 Mbps, those gaps compound quickly.
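As a rough illustration of what auditing generated markup for those gaps could look like, here is a minimal sketch. The `auditImages` helper and `ImgIssue` type are hypothetical names introduced for this example, and the check is regex-based, so treat it as a quick lint under those assumptions rather than a replacement for a real HTML parser or a Lighthouse run.

```typescript
// Hypothetical helper (not from any library): flags <img> tags in a
// generated HTML string that lack explicit dimensions or a loading hint.
interface ImgIssue {
  tag: string;        // the raw <img ...> tag as it appeared in the markup
  missing: string[];  // which of width / height / loading were absent
}

function auditImages(html: string): ImgIssue[] {
  const issues: ImgIssue[] = [];
  // Crude tag extraction: everything from "<img" up to the next ">".
  const imgTags = html.match(/<img\b[^>]*>/gi) ?? [];

  for (const tag of imgTags) {
    const missing: string[] = [];
    for (const attr of ["width", "height", "loading"]) {
      // The attribute name must follow whitespace and precede "=",
      // so "data-width" does not count as "width".
      const pattern = new RegExp("\\s" + attr + "\\s*=", "i");
      if (!pattern.test(tag)) missing.push(attr);
    }
    if (missing.length > 0) issues.push({ tag, missing });
  }
  return issues;
}
```

Missing `width` and `height` are the usual source of layout shift. A missing `loading` attribute is only a prompt for review: the hero image that drives LCP should generally stay eagerly loaded, so a human still decides which images get `loading="lazy"`.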

The human strategic layer, then, isn’t just about empathy and ambiguity navigation — it’s about knowing which performance constraints the AI ignored and fixing them before they become bounce rate problems.

Designers as Directors, Not Executors

Webster’s framing of designers as “directors of intent” maps cleanly onto what performance engineers have argued about front-end architecture for years: the decisions that matter most happen before a single line of code is written. Page architecture, rendering strategy (SSR vs. SSG vs. ISR), critical path prioritisation — these are strategic choices, not implementation details.

When AI handles the generative work, the strategic function doesn’t disappear. It concentrates. One senior strategist making intentional decisions about component hydration, route-level code splitting, or progressive enhancement carries more conversion impact than ten designers iterating on button colour. The role shifts from volume to precision.

This is already visible in how mature SEA digital teams are restructuring. Lazada’s regional UX function, for instance, has moved toward small, senior squads who define design principles and performance budgets — then use AI tooling to execute against those constraints at scale. The output volume increases; the headcount doesn’t necessarily follow.

What Human Strategy Actually Looks Like in Practice

Concretely, “directing intent” in an AI-accelerated workflow means defining the constraints the AI operates within — and auditing its outputs against those constraints before anything ships.

For performance teams, that means establishing measurable budgets upfront: LCP targets by page type, maximum third-party script weight, and acceptable interactivity thresholds (Total Blocking Time in lab runs or Interaction to Next Paint in the field, now that Lighthouse no longer reports Time to Interactive). AI tools don’t self-impose these limits; humans set them, and then validate that generated code respects them using tools like Lighthouse CI integrated into the deployment pipeline.
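A budget of this kind can be written down as data and checked mechanically. The sketch below is one possible shape, not a Lighthouse CI configuration: `PageType`, `Budget`, `budgets`, and `checkBudget` are hypothetical names, and the script-weight figures are illustrative assumptions. The 2,500 ms LCP and 0.1 CLS values match the published "good" thresholds; the stricter checkout figure is an assumption.

```typescript
// Hypothetical budget definitions, keyed by page type.
type PageType = "home" | "product" | "checkout";

interface Budget {
  lcpMs: number;              // Largest Contentful Paint, milliseconds
  cls: number;                // Cumulative Layout Shift, unitless score
  thirdPartyScriptKb: number; // total third-party script weight, kilobytes
}

// Illustrative numbers only; a real team would set these from its own data.
const budgets: Record<PageType, Budget> = {
  home: { lcpMs: 2500, cls: 0.1, thirdPartyScriptKb: 150 },
  product: { lcpMs: 2500, cls: 0.1, thirdPartyScriptKb: 150 },
  checkout: { lcpMs: 2000, cls: 0.1, thirdPartyScriptKb: 100 },
};

// Returns one message per metric that exceeds its budget.
// An empty array means the measured page is within budget.
function checkBudget(page: PageType, measured: Budget): string[] {
  const budget = budgets[page];
  const violations: string[] = [];
  const metrics = Object.keys(budget) as (keyof Budget)[];

  for (const metric of metrics) {
    if (measured[metric] > budget[metric]) {
      violations.push(
        `${page}: ${metric} ${measured[metric]} exceeds budget ${budget[metric]}`
      );
    }
  }
  return violations;
}
```

In a pipeline, the `measured` values would come from whatever audit tool the team already runs, and a non-empty result would fail the build, which is what treating a violation as broken rather than suboptimal amounts to in practice.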

For UX strategy, it means writing better briefs. The quality of AI output correlates directly with the specificity of the input. A prompt that includes user literacy assumptions, target device profiles, and conversion objectives produces something closer to deployable than one that doesn’t. That brief-writing skill — translating business context into generative constraints — is increasingly the highest-leverage competency in a digital team.

In multilingual SEA contexts, it also means explicitly testing AI-generated flows against non-English content. Thai and Bahasa Indonesia strings routinely break layouts designed in English, and no current AI prototyping tool accounts for that automatically.
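One cheap first pass before visual testing is to flag translated strings that are much longer than the English they replace. The sketch below assumes a simple key-to-string dictionary per locale; `findLongTranslations`, the `Overflow` type, and the 1.3 default ratio are hypothetical choices for illustration, and the result is a list of candidates for manual review, not proof that a layout breaks.

```typescript
// Hypothetical result type: one entry per suspiciously long translation.
interface Overflow {
  key: string;
  locale: string;
  ratio: number; // translated length divided by source length
}

// Flags translations whose length exceeds maxRatio times the source string.
// Note: .length counts UTF-16 code units, which overstates visual width for
// scripts with combining marks such as Thai, so treat this as a first-pass
// filter that feeds real device testing.
function findLongTranslations(
  source: Record<string, string>,
  translations: Record<string, Record<string, string>>,
  maxRatio: number = 1.3
): Overflow[] {
  const flagged: Overflow[] = [];

  for (const locale of Object.keys(translations)) {
    const table = translations[locale];
    for (const key of Object.keys(source)) {
      const original = source[key];
      const translated = table[key];
      // Skip keys with no translation or an empty source string.
      if (translated === undefined || original.length === 0) continue;

      const ratio = translated.length / original.length;
      if (ratio > maxRatio) flagged.push({ key, locale, ratio });
    }
  }
  return flagged;
}
```

Run against the string tables for each target locale, this gives the team a short list of buttons and labels to check on real devices first.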

Key Takeaways

- Establish performance budgets (LCP, CLS, script weight) as design constraints before AI tooling generates anything — outputs that violate them should be treated as broken, not just suboptimal.

- The strategic value in AI-accelerated teams shifts toward brief quality and output auditing — invest in the humans who can write precise generative constraints and validate results against real-world context.

- In SEA specifically, AI-generated interfaces require explicit localisation testing: device profiles, connection speeds, and multilingual string lengths will surface failures that stay invisible in English-language prototypes.

The honest question for digital leaders right now isn’t whether to use AI in the design and build workflow; the market has already made that decision. It’s whether your team has shifted its human effort toward the decisions AI can’t make: the contextual judgment calls, the performance trade-offs, the advocacy for users that no prompt can fully encode. If your senior people are still doing what the AI can now do, the bottleneck isn’t your tooling.

Written by

Diesel Grizzly

Core Web Vitals, rendering strategies, PWAs, and the relentless pursuit of sub-second load times. Believes that performance is the most underrated conversion optimisation lever in existence.