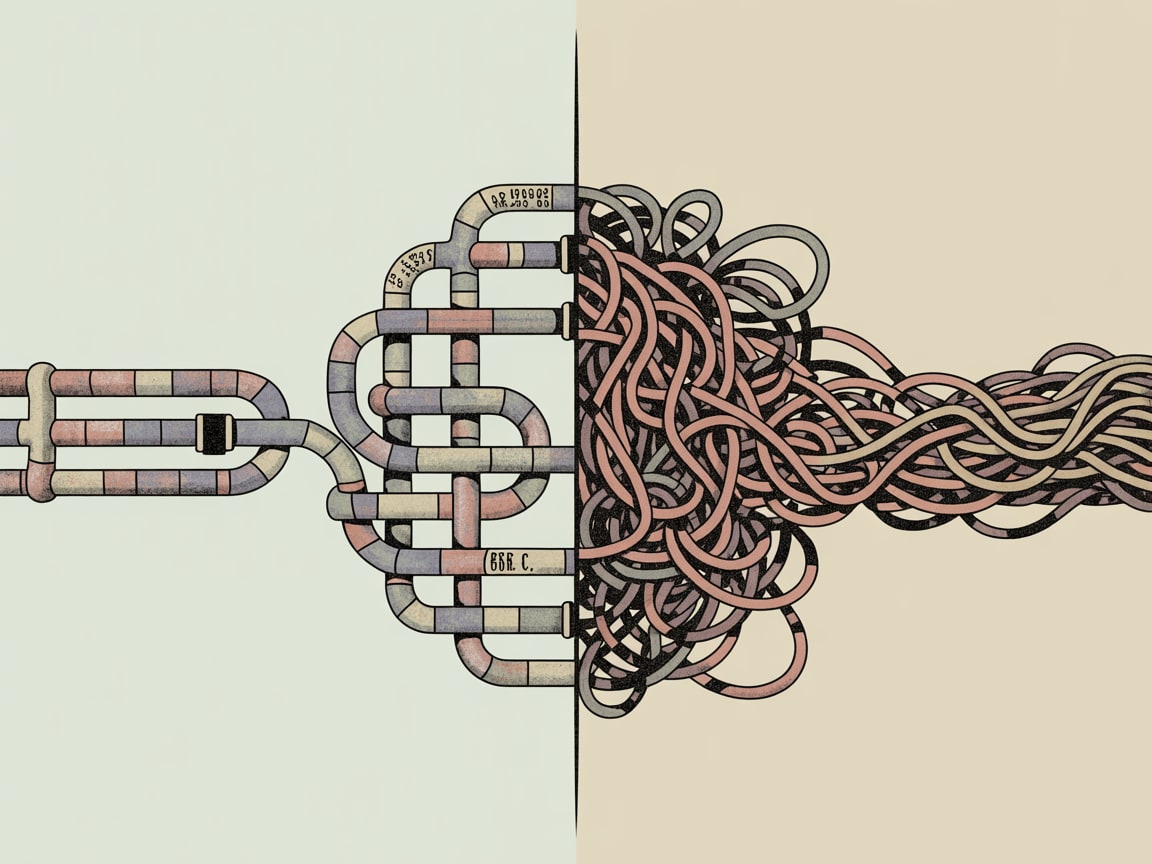

AI coding agents like Claude Code can ship production-ready code fast — but without solid data pipeline thinking, you're automating chaos. Here's what data teams need to know.

AI coding agents shipped faster than most data teams were ready for them. Claude Code — Anthropic’s terminal-native coding agent — can now take a natural language prompt and return production-ready Python, complete with error handling, tests, and documentation. That’s genuinely useful. It’s also, in the wrong hands, a very efficient way to build a pipeline that looks solid and falls apart the moment real data hits it.

What AI Coding Agents Actually Change for Data Engineers

Towards Data Science recently profiled Claude Code’s ability to generate robust, structured code from plain-language instructions: handling edge cases, writing unit tests, and producing documentation that might take a junior engineer a day to write by hand. For data teams, the productivity ceiling just moved.

But here’s what that framing misses: the quality of AI-generated pipeline code is almost entirely downstream of the quality of your architectural decisions. Feed Claude Code a vague prompt about ingesting user event data and you’ll get clean, functional code that solves the wrong problem elegantly. The agent doesn’t know your schema conflicts, your upstream SLA dependencies, or the fact that your Shopee seller data arrives three hours late every Tuesday. That context lives in your head — or ideally, in your architecture documentation — and no coding agent can substitute for it.

The practical shift is this: senior data engineers should now be spending less time writing boilerplate and more time on what they were always supposed to be doing — designing the right abstractions before a single line gets written.

Variable Discretization and the Underrated Art of Data Preparation

One of the quieter data engineering decisions that compounds over time is how you treat continuous variables before they enter modelling or reporting layers. Towards Data Science covered five discretization approaches recently — equal-width binning, equal-frequency binning, K-Means clustering, decision tree-based methods, and custom domain-driven cuts — and while this reads like a data science topic, it’s fundamentally a pipeline architecture question.

In practice, discretization choices made at the transformation layer have long downstream consequences. A Southeast Asian telco client binning ARPU into deciles for a churn model is making a decision that affects how that feature behaves across quarterly retraining cycles, how it renders in a BI dashboard, and whether a marketing analyst in Manila can actually interpret the output without a data scientist in the room.

The right discretization method depends on distribution shape, business semantics, and how the variable will be used downstream — not on what’s easiest to implement. Equal-width binning is seductive because it’s simple. It’s also likely to produce bins that mean nothing to a commercial team if the underlying distribution is skewed, which in SEA consumer datasets — where income and spending distributions are heavily right-tailed — it almost always is.
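
To make the skew problem concrete, here is a minimal sketch comparing equal-width and equal-frequency binning. The `spend` values are invented for illustration and the helper names are hypothetical. It uses only the standard library; in pandas, `pd.cut` and `pd.qcut` play these two roles.

```python
from bisect import bisect_right
from collections import Counter
from statistics import quantiles

# Hypothetical right-skewed monthly spend values (illustrative, not real data).
spend = [5, 6, 7, 8, 9, 10, 12, 14, 15, 18, 20, 25, 30, 40, 60, 400]


def equal_width_edges(values, n_bins):
    """Interior edges that split [min, max] into n_bins intervals of equal width."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins
    return [lo + width * i for i in range(1, n_bins)]


def equal_frequency_edges(values, n_bins):
    """Interior edges at the quantiles, so each bin holds roughly the same count."""
    return quantiles(values, n=n_bins, method="inclusive")


def bin_counts(values, edges):
    """Count how many values land in each bin defined by the interior edges."""
    counts = Counter(bisect_right(edges, v) for v in values)
    return [counts.get(i, 0) for i in range(len(edges) + 1)]


width_counts = bin_counts(spend, equal_width_edges(spend, 4))
freq_counts = bin_counts(spend, equal_frequency_edges(spend, 4))

print("equal-width counts:    ", width_counts)
print("equal-frequency counts:", freq_counts)
```

On this sample, the single large value stretches the equal-width bins so far that nearly every customer lands in the first one, while the quantile-based edges spread customers evenly. Neither result is automatically the right one; the point is that the choice changes what each bin means to the people reading it.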

This is a decision that should live in your data contract documentation, not in an undocumented pandas cut() call buried in a notebook someone ran once in 2024.
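
One way to keep that decision visible is to treat the bin definition as a small, versioned object rather than an inline call. The sketch below is an assumption about how this could look, not a standard: `BinSpec`, its fields, and the ARPU thresholds are all hypothetical.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class BinSpec:
    """A documented, versioned discretization rule (hypothetical structure)."""
    feature: str
    version: str
    edges: tuple      # interior bin edges, ascending
    labels: tuple     # one label per bin, so len(edges) + 1 entries
    rationale: str    # why these cut points were chosen

    def __post_init__(self):
        if list(self.edges) != sorted(self.edges):
            raise ValueError("edges must be in ascending order")
        if len(self.labels) != len(self.edges) + 1:
            raise ValueError("need exactly one more label than edges")

    def assign(self, value):
        """Return the label of the bin containing value (edges are lower-inclusive)."""
        return self.labels[bisect_right(self.edges, value)]


# Illustrative thresholds only; real cut points would come from the business.
arpu_tiers = BinSpec(
    feature="arpu_usd",
    version="2024-01",
    edges=(5.0, 15.0),
    labels=("low", "mid", "high"),
    rationale="Tiers agreed with the commercial team; review at each retraining.",
)

print(arpu_tiers.assign(3.0), arpu_tiers.assign(5.0), arpu_tiers.assign(40.0))
```

Because the spec carries a version and a rationale, a change to the cut points becomes a reviewable change to the contract instead of a silent edit in a notebook.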

The Lakehouse Model and Where AI-Assisted Code Fits In

The rise of lakehouse architectures — Delta Lake, Apache Iceberg, and their managed equivalents on AWS and GCP — has changed what “production-ready” even means for data pipelines. You’re no longer just writing code that runs. You’re writing code that participates in a versioned, schema-enforced, time-travel-capable data ecosystem.

This matters for how you use AI coding agents. Claude Code can generate a well-structured PySpark job. It cannot, without explicit instruction, generate one that respects your existing partition strategy, writes to the correct Iceberg table version, or handles schema evolution in a way that doesn’t silently break downstream consumers.
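
A guardrail for the schema-evolution case can be sketched as a pre-write check that compares an incoming schema against the documented contract. This is a simplified illustration in plain Python: the column names and type strings are hypothetical, and the rule that additive changes are safe while removals and type changes are breaking is an assumption. Table formats such as Iceberg and Delta Lake have their own evolution rules, which are not modelled here.

```python
def classify_schema_change(contract, incoming):
    """Compare two {column: type} mappings and flag breaking differences.

    Assumption: added columns are non-breaking; removed or retyped columns
    are breaking for downstream consumers.
    """
    contract_cols, incoming_cols = set(contract), set(incoming)
    added = sorted(incoming_cols - contract_cols)
    removed = sorted(contract_cols - incoming_cols)
    retyped = sorted(
        col for col in contract_cols & incoming_cols
        if contract[col] != incoming[col]
    )
    return {
        "added": added,
        "removed": removed,
        "retyped": retyped,
        "breaking": bool(removed or retyped),
    }


# Hypothetical contract and incoming batch schema.
contract_schema = {"order_id": "string", "amount": "decimal(18,2)", "currency": "string"}
incoming_schema = {"order_id": "string", "amount": "double", "channel": "string"}

report = classify_schema_change(contract_schema, incoming_schema)
print(report)
```

A job generated by an agent could call a check like this before writing and fail loudly when `breaking` is true, rather than letting the change reach downstream consumers unannounced.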

The practical implication: teams that have invested in clear data contracts and documented architecture patterns will extract dramatically more value from AI coding agents than those who haven’t. The agent becomes a force multiplier on good architectural thinking — and an accelerant on bad habits if that foundation isn’t there.

For SEA-based teams operating across multiple markets (ingesting from Lazada’s seller APIs, Grab’s transaction feeds, or LINE OA event streams), this is not an abstract concern. These sources have inconsistent schemas, variable latency, and market-specific quirks. A coding agent that doesn’t know the difference between a Thai baht transaction and an Indonesian rupiah one will produce code that compiles, tests clean, and produces subtly wrong revenue aggregations.
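
The currency failure mode is easy to reproduce. In the sketch below, the transaction records and field names are hypothetical. The naive sum runs without error and returns a number, but that number mixes baht and rupiah, so it means nothing. The safer version keeps totals per currency and refuses rows that lack a currency code.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical transactions from two markets (illustrative values).
transactions = [
    {"market": "TH", "currency": "THB", "amount": Decimal("350.00")},
    {"market": "ID", "currency": "IDR", "amount": Decimal("150000")},
    {"market": "TH", "currency": "THB", "amount": Decimal("120.50")},
]


def revenue_by_currency(rows):
    """Sum amounts per currency code; reject rows without one."""
    totals = defaultdict(Decimal)
    for row in rows:
        currency = row.get("currency")
        if not currency:
            raise ValueError("transaction is missing a currency code")
        totals[currency] += row["amount"]
    return dict(totals)


# Runs cleanly, but adds baht to rupiah: a meaningless figure.
naive_total = sum(row["amount"] for row in transactions)

# Keeps each currency separate until an explicit conversion step is applied.
per_currency = revenue_by_currency(transactions)

print("naive total: ", naive_total)
print("per currency:", per_currency)
```

Converting to a reporting currency would be a further, explicit step with a documented rate source. That belongs in the data contract, which is exactly the context an agent does not have unless someone supplies it.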

The Real Competitive Divide Is Architectural, Not Tooling

The organisations that will pull away from the pack over the next 18 months are not the ones with the most sophisticated AI tools. They’re the ones with the clearest data architecture — defined schemas, documented lineage, enforced data contracts — that allows them to use those tools without accumulating debt.

AI coding agents lower the cost of implementation dramatically. They do nothing to lower the cost of a bad architectural decision. If anything, they shorten the time between a bad decision and a production incident.

The data teams worth benchmarking against right now are the ones treating AI-assisted development not as a replacement for design thinking, but as a reason to invest more heavily in it. Clearer abstractions. More explicit contracts. Better documentation of the assumptions baked into every transformation.

The plumbing has always been where intelligence either lives or drowns. That hasn’t changed — it’s just moving faster now.

Key Takeaways

- Before adopting AI coding agents in your data workflows, document your data contracts and architectural assumptions explicitly — the agent will only be as good as the context you give it.

- Discretization choices for continuous variables should be driven by downstream business semantics and distribution shape, not implementation convenience — especially in SEA markets with skewed consumer data.

- Lakehouse architectures require pipeline code that respects schema evolution and partition strategies; AI-generated code needs explicit architectural guardrails to participate safely in these ecosystems.

As AI coding agents commoditise the cost of writing pipeline code, the strategic question shifts from “can we build this faster?” to “do we know exactly what we should be building?” How confident are you that your team’s architectural documentation is good enough to brief an AI agent as clearly as it would brief a new senior hire?

Written by

Chunky Grizzly

Designing the foundational plumbing (data warehouses, lakehouse models, and ETL pipelines) that separates organisations with genuine intelligence from those drowning in dashboards.