Building LLM agents is the easy part. Proving they work in production—reliably, at scale—is where most teams quietly fail. Here's how to fix that.

Somewhere between the demo and the deployment, most LLM agent projects quietly fall apart. Not because the model is bad — but because the team had no rigorous way to prove it was good.

This is the uncomfortable truth that Towards Data Science contributor Mukul Sood surfaces in a recent piece on production-ready LLM agent evaluation: we’ve developed real sophistication in building agents, and almost none in verifying them. For teams building customer engagement infrastructure — personalisation engines, real-time journey orchestrators, conversational interfaces — this gap isn’t academic. It’s a liability.

The Evaluation Gap Nobody Talks About

Most teams assess their LLM agents the way you’d assess a junior employee on their first day: give them a task, see if the output looks right. The problem is that an agent that produces plausible-looking outputs can still be reasoning incorrectly, and in a customer engagement platform (CEP) context, that means wrong segment assignments, mis-timed triggers, or personalisation decisions that erode rather than build trust.

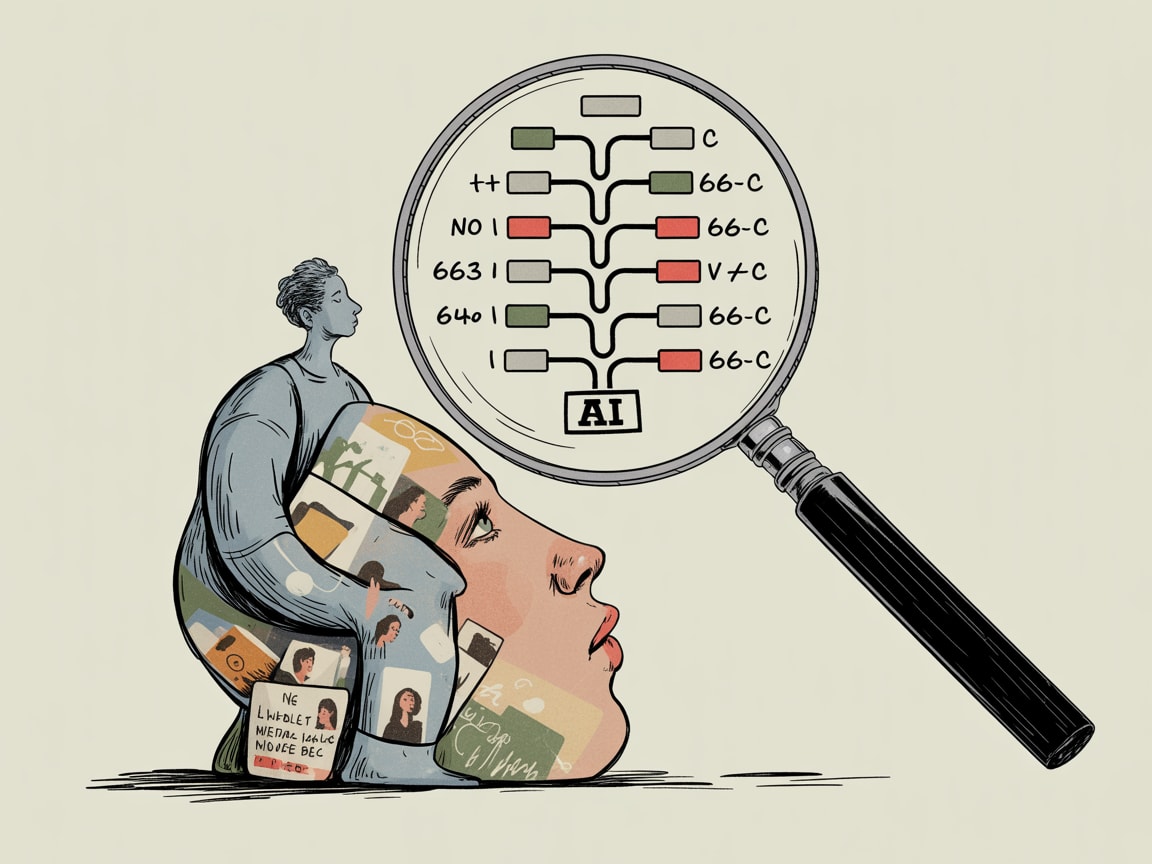

Sood’s framework draws a critical distinction between evaluating final outputs and evaluating reasoning trajectories — the sequence of tool calls, memory retrievals, and intermediate decisions that produce those outputs. An agent that gets the right answer through flawed reasoning is one failed edge case away from a production incident. For a brand running real-time engagement across Shopee, LINE, and a mobile app simultaneously, that’s not a theoretical concern.

The practical implication: your evaluation pipeline needs to instrument the agent’s internal steps, not just score its answers. That means logging tool invocations, tracking state transitions, and building test suites that probe failure modes you’ve deliberately anticipated.
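As a rough illustration of what instrumenting internal steps can look like, here is a minimal Python sketch. Everything in it is hypothetical and not taken from Sood’s piece: the `Trace` and `TraceStep` classes, the `traced` wrapper, and the two toy tools `lookup_segment` and `pick_offer` are stand-ins for whatever your agent framework actually exposes. The point is only that the check at the end scores the path as well as the answer.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class TraceStep:
    kind: str                      # "tool_call" or "state_transition"
    name: str                      # tool name, or "old->new" for a transition
    payload: dict[str, Any] = field(default_factory=dict)


@dataclass
class Trace:
    steps: list[TraceStep] = field(default_factory=list)

    def record(self, kind: str, name: str, **payload: Any) -> None:
        self.steps.append(TraceStep(kind, name, payload))

    def tool_sequence(self) -> list[str]:
        # Only the ordered tool names, ignoring state transitions.
        return [s.name for s in self.steps if s.kind == "tool_call"]


def traced(trace: Trace, tool_name: str, fn: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap a tool so every invocation is appended to the trace."""
    def wrapper(**kwargs: Any) -> Any:
        result = fn(**kwargs)
        trace.record("tool_call", tool_name, args=kwargs, result=result)
        return result
    return wrapper


# Hypothetical tools standing in for real CRM lookups.
def lookup_segment(user_id: str) -> str:
    return "high_value" if user_id == "u1" else "new"


def pick_offer(segment: str) -> str:
    return {"high_value": "loyalty_upgrade", "new": "welcome_discount"}[segment]


trace = Trace()
get_segment = traced(trace, "lookup_segment", lookup_segment)
get_offer = traced(trace, "pick_offer", pick_offer)

trace.record("state_transition", "idle->deciding")
offer = get_offer(segment=get_segment(user_id="u1"))
trace.record("state_transition", "deciding->done")

# Trajectory check: the answer and the path both have to match.
expected_path = ["lookup_segment", "pick_offer"]
output_ok = offer == "loyalty_upgrade"
trajectory_ok = trace.tool_sequence() == expected_path
```

An agent that returned `loyalty_upgrade` without ever calling `lookup_segment` would pass an output-only check and fail this one, which is the distinction the framework is drawing.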

Offline Evaluation as a Design Discipline

The term “offline evaluation” sounds like a testing afterthought. It shouldn’t be. Sood frames it as a prerequisite architecture decision — something you design before you build the agent, not after.

The core components of a credible offline evaluation framework include: curated golden datasets that reflect the real distribution of user intents (not just happy-path scenarios), automated scoring rubrics for sub-task completion, and regression suites that run on every model or prompt change. Pascal Janetzky’s recent ML lessons piece reinforces a related point: proactive planning and structured blocking (defining what not to let the model attempt) often matter more than optimising what it does attempt.
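To make the golden-dataset and regression-suite idea concrete, here is a small Python sketch. The cases, the two toy agents, and the `regression_gate` function are all invented for illustration; a real suite would call your actual agent and use a richer rubric than exact intent match.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class GoldenCase:
    case_id: str
    user_message: str
    expected_intent: str


# Hypothetical golden set; it deliberately includes non-happy-path inputs.
GOLDEN = [
    GoldenCase("g1", "where is my order", "order_status"),
    GoldenCase("g2", "cancel it NOW!!", "cancel_order"),
    GoldenCase("g3", "asdf ???", "fallback"),
]


def pass_rate(agent: Callable[[str], str], cases: list[GoldenCase]) -> float:
    passed = sum(agent(c.user_message) == c.expected_intent for c in cases)
    return passed / len(cases)


def regression_gate(candidate, baseline, cases, tolerance=0.0):
    """Return (ok, candidate_rate, baseline_rate); ok is False on a regression."""
    cand = pass_rate(candidate, cases)
    base = pass_rate(baseline, cases)
    return cand >= base - tolerance, cand, base


# Toy stand-in for the currently deployed agent.
def baseline_agent(msg: str) -> str:
    text = msg.lower()
    if "where" in text and "order" in text:
        return "order_status"
    if "cancel" in text:
        return "cancel_order"
    return "fallback"


# Toy stand-in for a "prompt change" that silently broke the fallback path.
def candidate_agent(msg: str) -> str:
    text = msg.lower()
    if "cancel" in text:
        return "cancel_order"
    return "order_status"


ok, cand_rate, base_rate = regression_gate(candidate_agent, baseline_agent, GOLDEN)
```

Here the candidate still handles both happy-path cases, so a spot check would look fine. The gate fails because the gibberish case no longer routes to `fallback`.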

For deployments specific to Southeast Asia (SEA), this means your golden datasets need to account for multilingual inputs (Thai, Bahasa, Vietnamese in the same agent), platform-specific context signals from Grab or Lazada integrations, and the genuinely messier user behaviour patterns that emerge from mobile-first, lower-bandwidth environments. A dataset built on clean English prompts will produce an agent that fails silently in the markets you actually care about.
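One way to keep those failures from staying silent is to report pass rates per language slice rather than as a single aggregate. The sketch below uses made-up results and an arbitrary 0.8 threshold, both assumptions for illustration only.

```python
from collections import defaultdict

# Hypothetical evaluation results as (language, passed) pairs.
results = [
    ("english", True), ("english", True), ("english", True), ("english", True),
    ("thai", True), ("thai", False),
    ("bahasa", True), ("bahasa", True),
    ("vietnamese", False), ("vietnamese", False),
]


def pass_rate_by_slice(rows):
    """Group results by slice key and compute a pass rate for each."""
    totals, passes = defaultdict(int), defaultdict(int)
    for slice_key, passed in rows:
        totals[slice_key] += 1
        passes[slice_key] += int(passed)
    return {key: passes[key] / totals[key] for key in totals}


def failing_slices(rates, threshold):
    """Names of slices whose pass rate is below the threshold, sorted."""
    return sorted(key for key, rate in rates.items() if rate < threshold)


overall = sum(passed for _, passed in results) / len(results)
rates = pass_rate_by_slice(results)
flagged = failing_slices(rates, threshold=0.8)
```

In this toy data the overall pass rate is 0.7, which might pass for "mostly working", while the Vietnamese slice fails every case. The per-slice view is what surfaces it.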

From Vibe Coding to Verified Systems

There’s a seductive counter-narrative right now, best illustrated by Katy Hagerty’s account of building a functional podcast clipping app over a single weekend using Replit and AI agents with minimal manual coding. The speed is real. The capability is real. And for prototyping and internal tooling, that kind of rapid iteration is genuinely valuable.

But there’s a category error when that same energy gets applied to production customer engagement systems. The difference between a weekend prototype and a CEP component isn’t polish — it’s consequence. A podcast clipper that occasionally misses the best moment is a minor annoyance. A personalisation agent that repeatedly surfaces irrelevant offers to high-value customers during their post-purchase window is measurable revenue damage.

The discipline Sood advocates for — defining evaluation criteria before writing agent logic, building failure-mode libraries, treating prompt changes as deployments that require regression testing — is precisely what separates the teams running AI in production from the teams perpetually “piloting” it.
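Treating a prompt change as a deployment can be as simple as refusing to ship any prompt and model combination that has no passing regression run on record. The following Python sketch shows one possible shape for that check. The function names and the in-memory `approved` set are assumptions; in practice the record would live in your CI system or a database.

```python
import hashlib


def prompt_fingerprint(prompt: str, model: str) -> str:
    """Stable short ID for an exact prompt + model pairing."""
    digest = hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()
    return digest[:12]


# Fingerprints that have a passing regression run on record (in-memory stand-in).
approved: set[str] = set()


def record_passing_run(prompt: str, model: str) -> None:
    approved.add(prompt_fingerprint(prompt, model))


def can_deploy(prompt: str, model: str) -> bool:
    return prompt_fingerprint(prompt, model) in approved


# Hypothetical prompt and model names.
v1 = "You are a helpful engagement assistant."
v2 = "You are a helpful engagement assistant. Keep replies short."

record_passing_run(v1, "model-a")

v1_allowed = can_deploy(v1, "model-a")
v2_allowed = can_deploy(v2, "model-a")          # edited prompt, no run yet
v1_new_model_allowed = can_deploy(v1, "model-b")  # same prompt, different model
```

Even a one-sentence prompt edit or a model swap produces a new fingerprint, so neither can reach production without going back through the suite.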

What This Means for Your Data Architecture

If you’re building or inheriting a customer engagement stack that incorporates LLM agents, three architectural decisions matter most right now.

First, separate your agent orchestration layer from your evaluation layer from day one. Bundling them together creates debt that compounds fast as your agent logic evolves. Second, invest in trace logging infrastructure before you invest in agent capabilities. You cannot debug what you cannot observe, and in a real-time engagement context, by the time a failure surfaces in business metrics, the root cause is already several model updates away. Third, build your golden datasets collaboratively with CRM and analytics teams, not just engineering. The failure modes that matter in customer engagement — wrong timing, wrong channel, wrong offer for the segment — are domain-knowledge problems, not model problems.
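A minimal sketch of that separation, in Python: the orchestration side only emits trace events, the evaluation side only reads them, and the rule being checked comes from domain owners rather than from the agent code. The event fields, the segment names, and the `allowed` channel table are all hypothetical.

```python
import io
import json


# --- Orchestration side: only knows how to emit trace events. ---
def emit(sink, run_id, step, **data):
    sink.write(json.dumps({"run_id": run_id, "step": step, **data}) + "\n")


# --- Evaluation side: only knows how to read trace events. ---
def load_runs(source):
    """Group JSON-lines events by run_id, preserving order."""
    runs = {}
    for line in source:
        event = json.loads(line)
        runs.setdefault(event["run_id"], []).append(event)
    return runs


def wrong_channel_runs(runs, allowed_channels):
    """Run IDs where a send used a channel not permitted for the segment."""
    bad = []
    for run_id, events in runs.items():
        for event in events:
            if event["step"] != "send":
                continue
            if event["channel"] not in allowed_channels[event["segment"]]:
                bad.append(run_id)
    return bad


# An in-memory buffer stands in for a real log store.
sink = io.StringIO()
emit(sink, "r1", "send", segment="high_value", channel="line")
emit(sink, "r2", "send", segment="new", channel="line")

# Hypothetical rule supplied by CRM and analytics teams, not engineering.
allowed = {"high_value": {"line", "push"}, "new": {"push"}}

sink.seek(0)
runs = load_runs(sink)
flagged = wrong_channel_runs(runs, allowed)
```

Because the evaluator depends only on the event format, the agent logic can change freely without touching the evaluation code, and the channel rules can be edited by the people who actually own them.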

The teams in SEA who will get durable value from LLM agents in their engagement stacks are the ones treating evaluation as architecture, not QA.

The tools to build sophisticated agents have democratised faster than the tools to verify them. That asymmetry won’t last — but it will cause real damage in the interim to brands that mistake impressive demos for production readiness. The question worth sitting with: if your LLM agent made a systematically wrong personalisation decision for six weeks, would your current instrumentation even catch it?

At grzzly, we work with growth and data teams across SEA to design customer engagement infrastructure that holds up under real-world conditions, not just sandbox ones. If you’re evaluating where AI agents fit into your CEP stack, or trying to build the evaluation rigour that makes production deployment defensible, we’d like to think through that with you.

Written by

Brooding Grizzly

Designing CEP frameworks that move beyond batch-and-blast into real-time, context-aware engagement across channels, devices, and the messiness of actual human behaviour.