AI coding agents can ship data pipelines fast — but unstructured generation creates black boxes that break at scale. Here's how to build for longevity.

AI coding agents are now fast enough to scaffold a working data pipeline before your morning coffee cools. The problem isn’t the speed — it’s what happens six months later when the pipeline breaks and nobody, including the agent that wrote it, can explain why.

For data teams shipping dashboards and monetisation infrastructure across Southeast Asia’s (SEA’s) fragmented markets, that’s not a theoretical risk. It’s a scheduled crisis.

The Black Box Problem Is a Data Architecture Problem

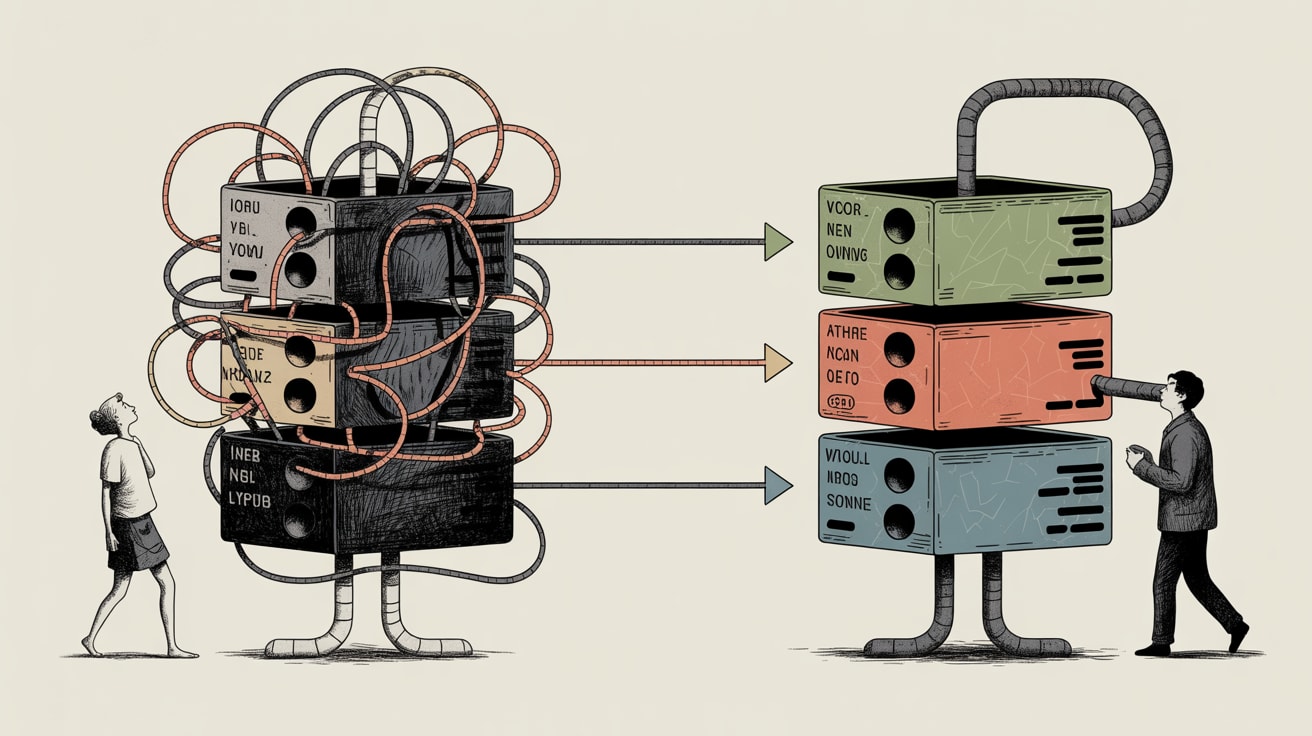

Towards Data Science contributor Yonatan Sason put the issue precisely: unstructured AI code generation produces tightly coupled, monolithic modules where everything talks to everything. In a notification system example he examined, this architecture meant a change to one component silently broke three others — with no dependency map to trace the failure.

Translate that to a data context: your ingestion layer, transformation logic, and reporting outputs are all woven into one undifferentiated script. When your Shopee sales feed changes its schema — and it will — the entire pipeline fails. Your dashboard goes dark. Your monetisation reporting goes with it.

The fix isn’t to stop using AI coding agents. It’s to mandate structured generation: decompose pipelines into independent components with explicit, one-directional dependencies before a single line of agent-generated code is written.
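One way to make "explicit, one-directional dependencies" concrete is to have every stage declare only the stages it reads from, then derive the run order from those declarations. The sketch below assumes hypothetical stage names and uses Python's standard-library `graphlib.TopologicalSorter`, which refuses to produce an order (raising `CycleError`) if any stage ends up depending on its own output.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical stage names. Each stage lists only the stages it reads from,
# so data flows one way: ingestion -> validation -> transformation -> reporting.
PIPELINE: dict[str, set[str]] = {
    "ingest_shopee": set(),
    "ingest_lazada": set(),
    "validate": {"ingest_shopee", "ingest_lazada"},
    "transform": {"validate"},
    "report": {"transform"},
}


def execution_order(stages: dict[str, set[str]]) -> list[str]:
    """Return an order in which every stage runs after all of its inputs.

    Raises graphlib.CycleError if any stage depends, directly or indirectly,
    on its own output, i.e. if the one-directional rule is broken.
    """
    return list(TopologicalSorter(stages).static_order())


if __name__ == "__main__":
    print(execution_order(PIPELINE))
    # A change that makes "transform" read from "report" closes a loop and is rejected.
    broken = {**PIPELINE, "transform": {"validate", "report"}}
    try:
        execution_order(broken)
    except CycleError as err:
        print("Rejected, cycle found:", err.args[1])
```

Declaring dependencies as data rather than burying them in function calls also gives you something to hand an AI agent as part of its brief: generate this stage, reading only from these inputs.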

What Production-Ready Actually Means for Data Pipelines

Eivind Kjosbakken’s walkthrough of production-ready code with Claude Code surfaces a distinction that data teams routinely miss: a working prototype and a production-ready pipeline are architecturally different objects, not different versions of the same thing.

Production-ready data code requires error handling at every ingestion boundary, schema validation before transformation, and logging that surfaces failures to the right person automatically. In SEA deployments, this matters more than in most regions: you’re often pulling from platforms such as Lazada, Grab, and LINE OA, which have inconsistent API versioning and underdocumented schema changes.
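A minimal sketch of schema validation at the ingestion boundary, using only the standard library. The field names in `EXPECTED_SCHEMA` and the `SchemaError` class are hypothetical; a real marketplace feed will differ. Missing or mistyped fields reject the row, while unexpected new fields are logged as a likely upstream schema change rather than failing the run.

```python
import logging
from typing import Any

logger = logging.getLogger("pipeline.ingest")

# Hypothetical schema for a marketplace order feed; real field names will differ.
EXPECTED_SCHEMA: dict[str, type] = {
    "order_id": str,
    "sku": str,
    "quantity": int,
    "unit_price": float,
}


class SchemaError(ValueError):
    """Raised when a record does not match the expected schema."""


def validate_record(record: dict[str, Any], schema: dict[str, type] = EXPECTED_SCHEMA) -> dict[str, Any]:
    """Check one record at the ingestion boundary, before any transformation runs."""
    missing = [field for field in schema if field not in record]
    wrong_type = [
        field for field, expected in schema.items()
        if field in record and not isinstance(record[field], expected)
    ]
    unexpected = [field for field in record if field not in schema]
    if unexpected:
        # Extra fields often mean the upstream platform changed its schema: warn, don't fail.
        logger.warning("Unexpected fields %s in order %s", unexpected, record.get("order_id"))
    if missing or wrong_type:
        raise SchemaError(f"missing={missing} wrong_type={wrong_type}")
    return record


def validate_batch(records: list[dict[str, Any]]) -> tuple[list[dict[str, Any]], list[tuple[dict[str, Any], str]]]:
    """Split a batch into valid and rejected rows, and log a summary of the rejects."""
    valid, rejected = [], []
    for record in records:
        try:
            valid.append(validate_record(record))
        except SchemaError as err:
            rejected.append((record, str(err)))
    if rejected:
        # In production, this is where an alert to the feed's owner would be raised.
        logger.error("%d of %d records failed schema validation", len(rejected), len(records))
    return valid, rejected
```

The type check is deliberately strict: an integer `unit_price` such as `10` fails the `float` check. Whether to coerce types or reject them is exactly the kind of failure-mode decision worth writing into an agent's brief.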

Claude Code and similar agents can generate this scaffolding, but only if you prompt for it explicitly. Left to their defaults, agents optimise for functional brevity, not operational resilience. The practical discipline: treat your AI agent like a junior analyst, capable and fast, but requiring a structured brief that specifies failure modes, not just desired outputs.

Visualisation as the First Line of Architecture Audit

Here’s where dashboard practice intersects directly with architecture hygiene. Rhyd Lewis’s piece on graph colouring with Python makes a point that applies well beyond algorithm visualisation: making relationships visible is the fastest way to find problems that logic alone misses.

For data teams, this translates to a concrete practice: before deploying any AI-generated pipeline into production, visualise its dependency graph. Tools like dbt’s DAG view or a simple NetworkX plot of your transformation steps will surface circular dependencies, unexpected coupling, and modules with too many inputs — the exact failure patterns Sason identifies as the black box problem.
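Before plotting anything, the same audit can be run as a quick check. The sketch below takes a hypothetical mapping of each step to the steps it reads from, flags steps with high fan-in (too many inputs) or high fan-out (a shared dependency many steps lean on), and reports a cycle if one exists. It uses the standard library's `graphlib`; in NetworkX, the rough equivalents are building an `nx.DiGraph` and calling `nx.simple_cycles`, `G.in_degree()` and `G.out_degree()`. The thresholds of 3 are arbitrary assumptions.

```python
from graphlib import CycleError, TopologicalSorter


def audit_dependencies(
    deps: dict[str, set[str]], max_fan_in: int = 3, max_fan_out: int = 3
) -> dict[str, object]:
    """Flag structural smells in a pipeline graph.

    deps maps each step to the set of steps it reads from.
    """
    fan_in = {step: len(inputs) for step, inputs in deps.items()}
    fan_out: dict[str, int] = {}
    for inputs in deps.values():
        for upstream in inputs:
            fan_out[upstream] = fan_out.get(upstream, 0) + 1

    try:
        TopologicalSorter(deps).prepare()  # raises CycleError on any loop
        cycle = None
    except CycleError as err:
        cycle = err.args[1]  # list of nodes; the first and last entries are the same node

    return {
        "cycle": cycle,
        "high_fan_in": sorted(s for s, n in fan_in.items() if n > max_fan_in),
        "high_fan_out": sorted(s for s, n in fan_out.items() if n > max_fan_out),
    }


if __name__ == "__main__":
    # Hypothetical graph: one FX utility feeding three market-cleaning steps and a GMV rollup.
    deps = {
        "fx_utils": set(),
        "clean_ph": {"fx_utils"},
        "clean_my": {"fx_utils"},
        "clean_th": {"fx_utils"},
        "gmv_weekly": {"fx_utils", "clean_ph", "clean_my", "clean_th"},
    }
    print(audit_dependencies(deps))
    # {'cycle': None, 'high_fan_in': ['gmv_weekly'], 'high_fan_out': ['fx_utils']}
```

A flagged node is not automatically a bug, but it tells you where to look first when you open the visual graph.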

In practice, a Manila-based e-commerce brand running weekly GMV dashboards for three regional markets used exactly this approach after an AI-assisted pipeline rewrite introduced silent rounding errors in currency conversion. The dependency graph showed a shared utility function being called inconsistently across four transformation steps. Two minutes of visualisation, forty minutes of remediation — versus what could have been weeks of trust erosion with commercial stakeholders.
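The details of that brand's code aren't public, but the general failure is easy to reproduce. In the hypothetical sketch below, one step rounds each converted line before summing while another sums first and rounds once, and the same orders produce different totals. The PHP amounts and the 0.0175 PHP-to-USD rate are made up for illustration. The fix is a single rounding policy, applied once at the reporting boundary, using `decimal.Decimal` rather than floats.

```python
from decimal import ROUND_HALF_UP, Decimal

CENT = Decimal("0.01")


def to_reporting_currency(amount: Decimal, rate: Decimal) -> Decimal:
    """Convert at full precision. Deliberately does NOT round."""
    return amount * rate


def round_for_report(amount: Decimal) -> Decimal:
    """The single rounding rule, applied once at the reporting boundary."""
    return amount.quantize(CENT, rounding=ROUND_HALF_UP)


def gmv_total(amounts: list[Decimal], rate: Decimal) -> Decimal:
    """Consistent policy: sum in source currency, convert, round once."""
    return round_for_report(to_reporting_currency(sum(amounts, Decimal("0")), rate))


def gmv_total_rounded_per_line(amounts: list[Decimal], rate: Decimal) -> Decimal:
    """Inconsistent policy: round each line first, then sum (drifts from gmv_total)."""
    return sum((round_for_report(to_reporting_currency(a, rate)) for a in amounts), Decimal("0"))


if __name__ == "__main__":
    orders = [Decimal("100.30")] * 3  # hypothetical order values in PHP
    rate = Decimal("0.0175")          # hypothetical PHP -> USD rate
    print(gmv_total(orders, rate))                   # 5.27
    print(gmv_total_rounded_per_line(orders, rate))  # 5.28
```

A one-cent gap per three orders looks trivial until it compounds across a week of GMV in three markets, which is why the drift tends to surface in stakeholder reconciliations rather than in code review.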

Monetisation Infrastructure Needs Modular Code, Not Just Clean Code

For publishers and brands treating first-party data as a commercial asset — audience segmentation products, affiliate attribution layers, programmatic data partnerships — the maintainability tax on AI-generated code is highest precisely where you can least afford it.

Monetisation data infrastructure has stricter SLA requirements than internal analytics. A dashboard going dark is an internal problem. An audience segment pipeline failing during a campaign delivery window is a contractual problem.

The structural answer is the same one Sason advocates for software: enforce single-responsibility modules. Each component of your monetisation pipeline — ingestion, identity resolution, segmentation logic, activation output — should be independently deployable, independently testable, and independently explainable to a non-technical stakeholder.
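A minimal sketch of what single-responsibility looks like in code: one function per stage, each with plain inputs and outputs so it can be tested alone, plus a thin orchestrator that only wires them together. The `Event` fields, the lookup-table identity resolution and the spend-threshold segment are all hypothetical stand-ins for whatever your real stages do.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user_key: str  # hypothetical: e.g. a hashed email or device ID
    category: str
    spend: float


def ingest(raw_rows: list[dict]) -> list[Event]:
    """Ingestion: parse raw rows into typed events, nothing else."""
    return [Event(r["user_key"], r["category"], float(r["spend"])) for r in raw_rows]


def resolve_identity(events: list[Event], id_map: dict[str, str]) -> list[Event]:
    """Identity resolution: map each user_key to a canonical ID (simple lookup as a stand-in)."""
    return [Event(id_map.get(e.user_key, e.user_key), e.category, e.spend) for e in events]


def segment(events: list[Event], category: str, min_spend: float) -> set[str]:
    """Segmentation: users whose total spend in a category meets the threshold."""
    totals: dict[str, float] = {}
    for e in events:
        if e.category == category:
            totals[e.user_key] = totals.get(e.user_key, 0.0) + e.spend
    return {user for user, total in totals.items() if total >= min_spend}


def activate(segment_ids: set[str]) -> list[str]:
    """Activation output: a sorted, deduplicated ID list for a downstream partner."""
    return sorted(segment_ids)


def run_pipeline(raw_rows: list[dict], id_map: dict[str, str], category: str, min_spend: float) -> list[str]:
    """Orchestrator: wiring only, no business logic of its own."""
    events = resolve_identity(ingest(raw_rows), id_map)
    return activate(segment(events, category, min_spend))
```

Because each stage is a pure function of its inputs, a failing segment can be reproduced with a handful of hand-written `Event` objects, without re-running ingestion or touching a partner's activation endpoint.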

This isn’t about rejecting AI-assisted development. Teams using Claude Code or similar tools to generate modular components within a pre-defined architecture ship faster, and end up with more maintainable code, than teams writing monolithic scripts by hand. The discipline is in the architecture brief, not the tool choice.

Key takeaways:

- Require explicit dependency mapping and error-handling scaffolding in every AI coding agent prompt — functional output and production-ready output are not the same specification.

- Visualise pipeline dependency graphs before deployment; graph-based audits catch structural failures that code review misses.

- For monetisation data infrastructure, enforce single-responsibility architecture at the module level — not as a best practice, but as a commercial continuity requirement.

The deeper question for data leaders in SEA’s fast-moving market is this: as AI coding agents get faster and more capable, does your team’s architectural discipline keep pace — or does speed become the justification for the structural debt that eventually stops your most commercially critical pipelines cold?


Written by Inkblot Grizzly

Crafting dashboards that tell the truth, and monetisation frameworks that make that truth commercially useful. Turns abstract data assets into revenue-generating products for publishers and brands alike.