When AI agents manage agents in your data and engagement stack, accountability evaporates. Here's how to architect for real-world consequences.

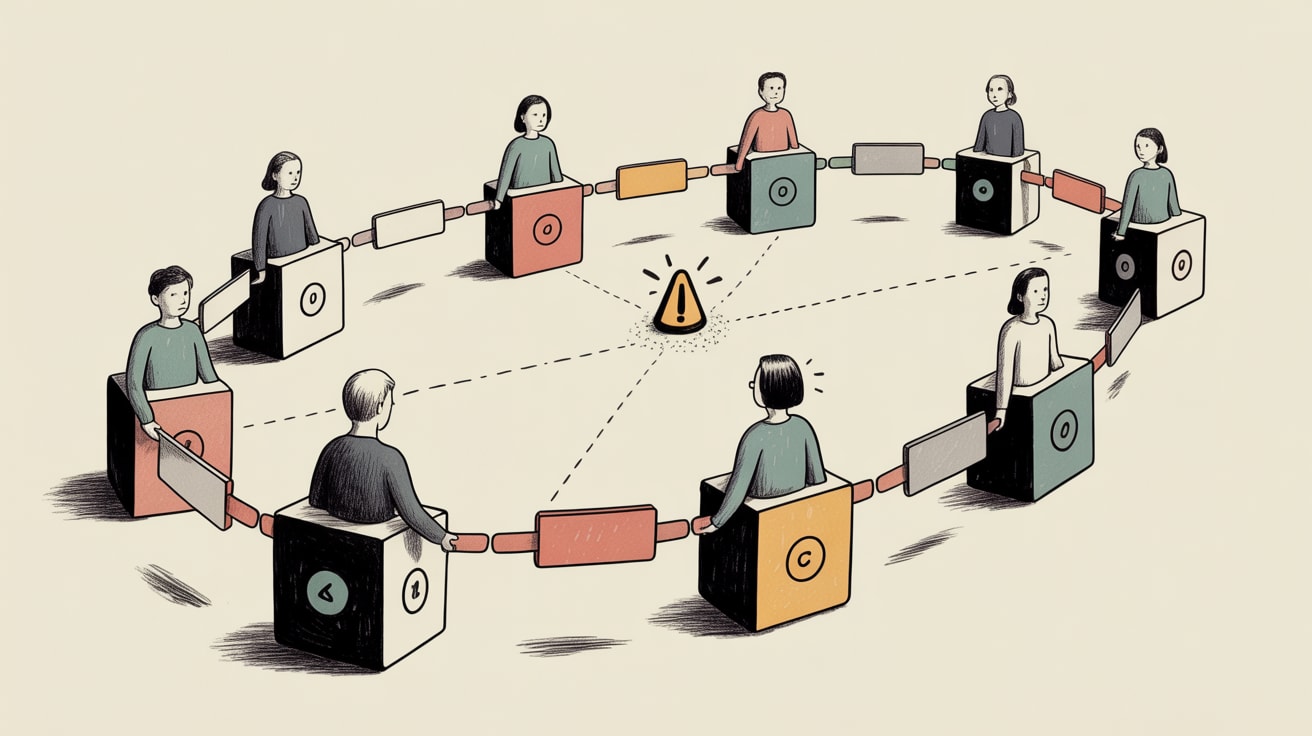

The scenario Barr Moses of Monte Carlo Data describes is mundane precisely because it is so plausible: an orchestration agent spins up a sub-agent to analyse cloud usage patterns, and that sub-agent pulls from a memory store managed by a third agent, one that was silently misconfigured three weeks earlier. It’s 2 a.m. No alerts fire. No one is paged. The bad data propagates quietly downstream. By morning, your customer engagement platform has spent the night making personalisation decisions on corrupted inputs, and no single system, or person, has raised a hand.

For teams building real-time customer engagement platform (CEP) frameworks, this isn’t a theoretical risk. It’s the operational shape of where we’re heading.

The Accountability Gap Is Structural, Not Accidental

Multi-agent AI architectures distribute decision-making across layers that weren’t designed to surface failures to humans. Each agent is optimised for its narrow task. None of them are optimised for explainability at the system level. Moses’ point cuts to the bone: when agents manage agents, blame becomes diffuse even as consequences remain entirely concrete. A misfired segment push to 2 million LINE users in Thailand doesn’t care which sub-agent corrupted the cohort definition.

This is architecturally different from a broken ETL job or a misconfigured Salesforce workflow. In those cases, a human wrote the logic and a human can own the failure. In agentic pipelines, the logic is emergent — and accountability frameworks built for deterministic systems simply don’t map across. Teams need to deliberately re-engineer governance into agentic stacks, not bolt it on after the first incident.

What Interpretable AI Actually Buys You in Engagement Contexts

There’s a useful counterpoint in recent work on neuro-symbolic AI published on Towards Data Science. In a fraud-detection experiment on the Kaggle Credit Card Fraud dataset, where fraud accounts for just 0.17% of transactions, a hybrid neural network was extended with a differentiable rule-learning module that extracted IF-THEN rules automatically during training, with no human-written rules injected. The result: interpretable, auditable decision logic that the network discovered on its own.
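To make the idea concrete, here is a toy sketch of a differentiable rule learner. It is not the architecture from the Towards Data Science experiment: it assumes a single soft-AND unit over four hypothetical binary features (`high_amount`, `foreign_merchant`, `night_time`, `new_device`) and synthetic data whose hidden rule is "high_amount AND new_device". Each feature gets a learnable inclusion weight; gradient descent pushes the weights towards 1 for features the rule needs and towards 0 for the rest, and thresholding the weights reads the result back as an IF-THEN rule.

```python
import itertools
import math

# Hypothetical binary features, for illustration only.
FEATURES = ["high_amount", "foreign_merchant", "night_time", "new_device"]


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def soft_and(x: list[int], w: list[float]) -> float:
    # Differentiable conjunction. A weight w[i] near 1 means "feature i is part
    # of the rule": if that feature is absent (x[i] == 0) the output drops to ~0.
    # A weight near 0 means the feature is ignored.
    p = 1.0
    for xi, wi in zip(x, w):
        p *= 1.0 - wi * (1 - xi)
    return p


def make_synthetic_data() -> list[tuple[list[int], int]]:
    # Every combination of the four features; the hidden ground-truth rule is
    # "high_amount AND new_device". Synthetic, not the Kaggle dataset.
    return [(list(x), int(x[0] == 1 and x[3] == 1))
            for x in itertools.product([0, 1], repeat=len(FEATURES))]


def train_rule(data, epochs: int = 2000, lr: float = 0.5) -> list[float]:
    theta = [0.0] * len(FEATURES)  # logits of the per-feature inclusion weights
    for _ in range(epochs):
        w = [sigmoid(t) for t in theta]
        grad = [0.0] * len(theta)
        for x, y in data:
            p = min(soft_and(x, w), 1.0 - 1e-9)  # keep 1 - p away from zero
            # Gradient of binary cross-entropy w.r.t. theta[i], using
            # dp/dtheta[i] = -(1 - x[i]) * p * w[i].
            scale = y - (1 - y) * p / (1.0 - p)
            for i, xi in enumerate(x):
                grad[i] += (1 - xi) * w[i] * scale / len(data)
        theta = [t - lr * g for t, g in zip(theta, grad)]
    return [sigmoid(t) for t in theta]


def extract_rule(w: list[float], threshold: float = 0.5) -> str:
    # Read the learned weights back as a human-readable IF-THEN rule.
    kept = [name for name, wi in zip(FEATURES, w) if wi > threshold]
    return "IF " + " AND ".join(kept) + " THEN flag_fraud"


weights = train_rule(make_synthetic_data())
print(extract_rule(weights))  # IF high_amount AND new_device THEN flag_fraud
```

Because the conjunction is a product of per-feature factors, each learned weight maps directly onto a rule clause, which is what makes the output auditable. Real transaction data is noisy and heavily imbalanced, so a practical module would likely need class weighting and more than one rule.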

The relevance for CEP isn’t fraud detection specifically. It’s the principle: systems can be designed to surface their own reasoning in human-readable form. An engagement model that can articulate why it downgraded a user’s send frequency, and log that rationale against the specific data input it acted on, is far more governable than one that just outputs a score. As you add agentic orchestration on top of your activation layer, interpretability isn’t a nice-to-have for compliance teams. It’s the mechanism that keeps accountability intact.
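A minimal sketch of what a rationale logged against a specific data input could look like, assuming a simple rule-based frequency decision. The rule, the field names (`opens_30d`, `sends_7d`) and the snapshot ID are hypothetical; the point is that each decision record carries its human-readable reason, the exact values that triggered it, and the identifier of the data snapshot it read.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    description: str                   # human-readable IF-THEN text
    fields: tuple[str, ...]            # input fields the rule reads
    predicate: Callable[[dict], bool]
    action: str


@dataclass(frozen=True)
class Decision:
    user_id: str
    action: str
    rationale: str
    input_snapshot_id: str             # which data input the decision was made on
    evidence: dict                     # the exact values the rule read


# Hypothetical rule, for illustration only.
RULES = [
    Rule("IF opens_30d == 0 AND sends_7d >= 5 THEN downgrade_frequency",
         ("opens_30d", "sends_7d"),
         lambda f: f["opens_30d"] == 0 and f["sends_7d"] >= 5,
         "downgrade_frequency"),
]
DEFAULT_ACTION = "keep_frequency"


def decide(user_id: str, features: dict, snapshot_id: str) -> Decision:
    # The first matching rule wins; its inputs are copied into the record as evidence.
    for rule in RULES:
        if rule.predicate(features):
            evidence = {k: features[k] for k in rule.fields}
            return Decision(user_id, rule.action, rule.description, snapshot_id, evidence)
    return Decision(user_id, DEFAULT_ACTION, "no rule fired", snapshot_id, {})


def log_line(decision: Decision) -> str:
    # One JSON line per decision, so an auditor can join it back to the snapshot.
    return json.dumps(asdict(decision), sort_keys=True)


decision = decide("user-1", {"opens_30d": 0, "sends_7d": 6, "ltv": 40.0}, "snapshot-0001")
print(log_line(decision))
```

Note that only the fields the rule actually read end up in `evidence`; the unrelated `ltv` value is left out, which keeps the audit trail focused on the cause of the decision.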

Embeddings as a Shared Context Layer — and Why That Raises the Stakes

Gemini Embeddings 2, previewed this week, positions itself as a unified semantic layer that encodes text, images, video, and audio into a shared vector space. For Southeast Asian (SEA) markets running multilingual campaigns in Bahasa, Thai, Vietnamese, and Tagalog at the same time, a single embedding model that handles cross-lingual semantic similarity without language-specific fine-tuning is genuinely useful infrastructure. Retrieval-augmented personalisation, in which your CEP pulls relevant content variants based on their semantic proximity to a user’s behavioural signal, becomes significantly more tractable.
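As a rough sketch of retrieval by semantic proximity: assume each content variant and the user’s recent behaviour have already been embedded into the same vector space (by Gemini Embeddings 2 or any other model; no embedding API call is shown here). Ranking variants by cosine similarity to the user’s vector then picks the closest content. The three-dimensional vectors and variant IDs below are toy, hypothetical values.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    # Cosine similarity; returns 0.0 for zero vectors instead of dividing by zero.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_variants(user_vec: list[float], variants: dict[str, list[float]], k: int = 2) -> list[str]:
    # Rank content variants by semantic proximity to the user's behavioural signal.
    ranked = sorted(variants, key=lambda vid: cosine(user_vec, variants[vid]), reverse=True)
    return ranked[:k]


# Toy 3-dimensional vectors standing in for real embeddings (hypothetical IDs).
variants = {
    "promo_th": [0.9, 0.1, 0.0],
    "promo_vi": [0.8, 0.2, 0.1],
    "news_id": [0.0, 0.1, 0.9],
}
user_signal = [1.0, 0.1, 0.0]
print(top_k_variants(user_signal, variants))  # ['promo_th', 'promo_vi']
```

A brute-force scan like this is fine for a handful of variants; at catalogue scale you would typically put the vectors in an approximate nearest-neighbour index instead.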

But here’s the governance implication: when a single embedding model becomes the semantic memory that multiple agents query, a misconfiguration in that layer is a misconfiguration everywhere. The Monte Carlo scenario becomes more acute, not less. A corrupted or semantically drifted embedding store will silently degrade personalisation quality across every touchpoint — push, in-app, email, paid retargeting — before any metric visibly breaks. Monitoring semantic drift in shared embedding stores needs to be a first-class operational concern, not an afterthought.
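One simple drift signal, sketched under assumptions: sample embeddings from the shared store at a trusted baseline and again now, then compare the cosine distance between the two centroids. The function names are illustrative and the 0.05 threshold is a hypothetical starting point, not a recommended value.

```python
import math


def centroid(vectors: list[list[float]]) -> list[float]:
    # Element-wise mean of a sample of embeddings.
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def cosine_distance(a: list[float], b: list[float]) -> float:
    # 1 - cosine similarity. A zero-length centroid is treated as maximal
    # drift (1.0) so that it gets flagged rather than silently ignored.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm if norm else 1.0


def centroid_drift(baseline, current, threshold: float = 0.05) -> tuple[float, bool]:
    # Compare the store's "semantic centre of mass" at baseline versus now.
    # Calibrate the threshold on your own data before alerting on it.
    distance = cosine_distance(centroid(baseline), centroid(current))
    return distance, distance > threshold


baseline = [[1.0, 0.0], [0.9, 0.1]]
shifted = [[0.0, 1.0], [0.1, 0.9]]
print(centroid_drift(baseline, shifted))
```

A centroid check is cheap enough to run on a schedule, but it can miss drift that leaves the mean unchanged (for example, vectors spreading out around the same centre), so treat it as one alarm among several rather than proof of health.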

Building Accountability Into Agentic CEP Stacks

The practical question isn’t whether to use agentic infrastructure — the efficiency gains in real-time orchestration are real, and teams that avoid it will fall behind on activation speed. The question is how to architect human accountability back into systems that are designed to operate without human intervention.

Three principles are worth implementing now:

- Give every automated decision path a named human owner in your runbook: not just a team, a person. When the orchestration agent makes a suppression decision that affects 500,000 users, someone’s name is on it.

- Review agentic decisions the way engineering teams are being coached to review AI-generated code. Towards Data Science’s guidance on reviewing Claude Code output applies directly: don’t review outputs in isolation, review the reasoning chain that produced them. Diff the logic, not just the result.

- Instrument your shared data layers, especially embedding stores and memory agents, with semantic drift detection and staleness flags. Silent misconfiguration is the enemy; make the system loud.
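Named ownership and staleness flags can be checked mechanically if the runbook lives as code. This sketch assumes a hypothetical registry in which each decision path declares an owner and the data layers it reads, and each layer declares how stale it is allowed to get; the names (`growth-team`, `jane.doe`, `embedding_store`, `memory_agent`) and age limits are illustrative. The embedding store below was last refreshed three weeks ago, echoing the Monte Carlo scenario.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class DataLayer:
    name: str
    last_refreshed: datetime
    max_age: timedelta            # older than this => raise a staleness flag


@dataclass(frozen=True)
class DecisionPath:
    name: str
    owner: Optional[str]          # should be a named person, not a team alias
    reads_from: tuple[str, ...]


def audit(paths, layers, now: datetime, team_aliases=frozenset()) -> list[str]:
    # Return human-readable problems; an empty list means every check passed.
    by_name = {layer.name: layer for layer in layers}
    problems = []
    for path in paths:
        if not path.owner:
            problems.append(f"{path.name}: no named owner")
        elif path.owner in team_aliases:
            problems.append(f"{path.name}: owner '{path.owner}' is a team, not a person")
        for layer_name in path.reads_from:
            layer = by_name.get(layer_name)
            if layer is None:
                problems.append(f"{path.name}: reads unregistered layer '{layer_name}'")
            elif now - layer.last_refreshed > layer.max_age:
                problems.append(f"{path.name}: '{layer_name}' is stale")
    return problems


now = datetime.now(timezone.utc)
layers = [
    DataLayer("embedding_store", now - timedelta(weeks=3), timedelta(days=1)),
    DataLayer("memory_agent", now - timedelta(hours=2), timedelta(hours=6)),
]
paths = [
    DecisionPath("push_suppression", "growth-team", ("embedding_store", "memory_agent")),
    DecisionPath("frequency_cap", "jane.doe", ("memory_agent",)),
]
for problem in audit(paths, layers, now, team_aliases={"growth-team"}):
    print(problem)
```

Run on a schedule and routed to the person named on each path, a check like this turns a silent misconfiguration into a page.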

The brands across SEA that will get this right aren’t necessarily the ones with the most sophisticated models. They’re the ones that treat data governance as a product, not a policy document — and build the same rigour into their agentic pipelines that they’d apply to any customer-facing release.

Key Takeaways

- Map every agentic decision path to a named human owner — diffuse accountability in automated systems is a design flaw, not a feature.

- Prioritise interpretable AI outputs in your engagement stack so that personalisation decisions carry auditable reasoning, not just scores.

- Instrument shared data layers (embedding stores, memory agents) with semantic drift monitoring before you scale multi-agent orchestration.

The shift from batch-and-blast to real-time, context-aware engagement was always going to require new infrastructure. The uncomfortable question is whether the speed of agentic adoption is outpacing our ability to stay accountable for what these systems do — and whether we’ll notice the gap before a customer does.

Sources

- https://www.montecarlodata.com/blog-agent-accountability/

- https://towardsdatascience.com/how-a-neural-network-learned-its-own-fraud-rules-a-neuro-symbolic-ai-experiment/

- https://towardsdatascience.com/introducing-gemini-embeddings-2-preview/

- https://towardsdatascience.com/how-to-effectively-review-claude-code-output/

Written by

Brooding Grizzly

Designing CEP frameworks that move beyond batch-and-blast into real-time, context-aware engagement across channels, devices, and the messiness of actual human behaviour.